With all of the advancements in computing technology, it can be hard to keep current. The jargon thrown around by sales associates when you are shopping for a new device seldom helps, and it often seems that those pushing you toward a specific computer don't understand how 64-bit computing works or how it benefits the user beyond the ability to access more RAM.

In this article, we will look at how the operating system and application software chosen to run on a 64-bit system, as well as a few features of the processor itself, affect the overall performance of the computer.

Processor and Operating System

Nearly all PCs on the market today ship with 64-bit processors, and most of those already have a 64-bit version of Windows preinstalled on them. This pairing is critical when you want to get the best performance out of your system. Although you can install a 32-bit operating system on a 64-bit computer, you would miss out on the additional benefits of the hardware.

When you install a 32-bit operating system on a 64-bit computer, the effect is an instant conversion of a 64-bit processor to a 32-bit processor.

- All of the processor-level instructions are limited to using the 32-bit general-purpose registers, hence native integer math is similarly limited in its range.

- The amount of physical memory that can be accessed drops to 4 GB, even if there is more memory installed.

- Hardware memory such as video memory will consume a portion of the addressable memory instead of being moved above it or having RAM remapped around the hardware memory addresses.

- All other software you wish to run on the system must be 32-bit and will be constrained by these same parameters.

Those limitations are the best reason to ensure you are using a 64-bit operating system on any computer that has a 64-bit processor.
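The 4 GB ceiling follows from the width of a 32-bit address. As a rough illustration only (the function name is made up for this sketch, and it assumes a bash-compatible shell, whose integer arithmetic is 64-bit), the arithmetic can be checked like this:

```shell
#!/usr/bin/env bash
# Hypothetical helper: how many GiB can be reached with a given number of address bits?
# Assumes byte-addressable memory; meaningful for widths from 30 to 62 bits.
addressable_gib() {
    local bits=$1
    echo $(( (1 << bits) >> 30 ))   # 2^bits bytes, converted to GiB
}

echo "32-bit addresses: $(addressable_gib 32) GiB"
echo "40-bit addresses: $(addressable_gib 40) GiB"
```

Every extra address bit doubles the reachable memory, which is why the jump from 32 to 64 bits removes the limit for all practical purposes.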

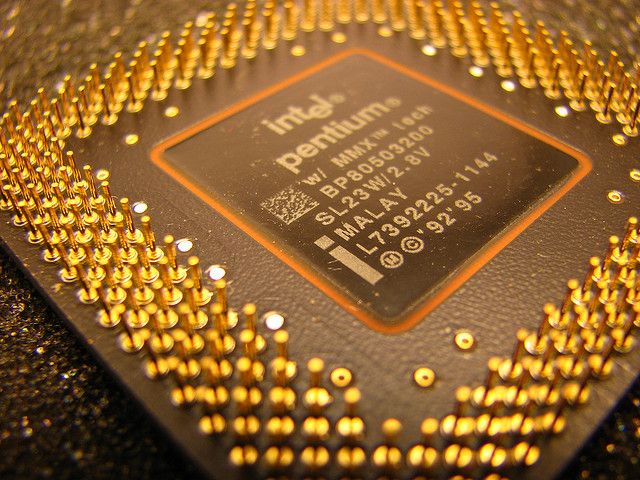

Processor Capabilities

Besides pairing an appropriate operating system with the hardware, elements of the CPU design also affect system performance. The number of cores available to do the processing is one of the most significant.

From Single to Multiple Cores

About two decades ago, almost all computers marketed to consumers used processors with a single processing core in the package. With this type of design, the computer could execute only a single instruction at a time, and the operating system could assign only a single execution thread to the processor at a time.

Today, a single silicon die may have 2, 4, or more cores on it, and the package may contain more than one die. With more than a single core in the processor package, the operating system sees each core as an individual processor to which it can assign threads, and each core operates independently of the others. Thus, on a quad-core system, the computer can execute four instructions simultaneously, one on each core.

Connecting Cores with Hyperthreading

In 2002, Intel introduced hyperthreading technology, which makes the operating system "see" two logical processors for each processing core on the chip. It works by having two distinct sets of processor state data, one for each logical processor, and a single shared execution core. This allows the operating system to assign an execution thread to each logical processor, each of which maintains its own state data. When one thread is blocked because it is waiting for data or another resource, the other logical processor can use the execution core for its processing, unless it too is in a wait state. Performance gains from this technology tend to range from 15 to 30 percent.

This does not mean that a quad-core processor is twice as fast as a dual-core processor running with the same clock speed in a given situation or that an Intel processor with hyperthreading will perform better than one without the technology. Certain software factors can completely nullify the existence of additional processing cores.
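To see how many logical processors your own operating system reports, a small check along these lines may help. It is a sketch for Linux-like systems: the function name is hypothetical, and it assumes that either `nproc` (from coreutils) or `getconf` is available. The `lscpu` part only runs if that tool is installed.

```shell
#!/usr/bin/env bash
# Hypothetical helper: count the logical processors the operating system can schedule on.
logical_cpus() {
    if command -v nproc >/dev/null 2>&1; then
        nproc --all
    else
        getconf _NPROCESSORS_ONLN
    fi
}

echo "Logical processors: $(logical_cpus)"

# Where lscpu exists, it also reports threads per core;
# a value of 2 usually indicates that hyperthreading is active.
if command -v lscpu >/dev/null 2>&1; then
    lscpu | grep -E 'Thread\(s\) per core|Core\(s\) per socket|Socket\(s\)' || true
fi
```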

Impact of Application Software

So, now that you have decided to get a new 64-bit Intel dual-core processor with hyperthreading and pair it up with a 64-bit version of Windows, you will get the best performance possible, right? Well, maybe.

While modern operating systems can take advantage of all the hardware has to offer, the application software you are using might not, especially legacy software.

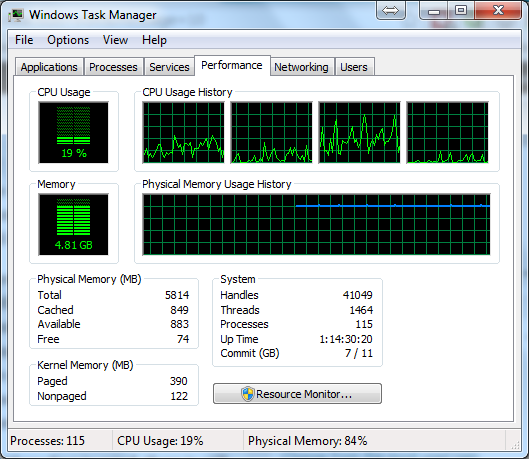

The older single-core CPUs mentioned above could only process a single thread at a time, and much of the software written at that time was designed to use just a single thread. Running that software on a multi-core system will still result in it using just one core for that thread. This is why you may see a quad-core system running at 25% load in the Task Manager with a single core at 100% utilization and the other three cores seemingly idle. The workload isn't spread out.
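The 25% figure is simple arithmetic: one fully busy core out of four. A minimal sketch, using a made-up helper name and assuming every core is either fully busy or fully idle:

```shell
#!/usr/bin/env bash
# Hypothetical helper: overall CPU load in percent when some cores are
# fully busy and the rest are idle. Integer division rounds down.
overall_load() {
    local busy_cores=$1 total_cores=$2
    echo $(( busy_cores * 100 / total_cores ))
}

echo "1 busy core of 4: $(overall_load 1 4)%"
echo "1 busy core of 8: $(overall_load 1 8)%"
```

On an eight-core machine the same single-threaded program would show up as roughly 12% overall load, even though it is running as fast as one core allows.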

In order to take advantage of all of the processing cores in a system, the software must be designed with parallel processing and multithreading in mind. The idea here is to break the problem down into discrete components that can be completed independently of each other so the computer can complete each task on separate cores concurrently. This can reduce the time taken to generate the desired result. It also means that a thread for the user interface does not lock up when heavy processing of other threads is happening on other cores.
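As a toy illustration of breaking a problem into independent pieces, the sketch below sums the integers from 1 to n by splitting the range into chunks and running each chunk as a separate background job. The function names are hypothetical, and real applications would use threads inside one program rather than shell jobs, but the idea of "split, run concurrently, combine" is the same.

```shell
#!/usr/bin/env bash
# Sum the integers from $1 to $2 inclusive (one independent unit of work).
sum_range() {
    local i total=0
    for (( i = $1; i <= $2; i++ )); do
        total=$(( total + i ))
    done
    echo "$total"
}

# Split 1..n into $2 chunks, compute them concurrently, and add up the partial sums.
parallel_sum() {
    local n=$1 jobs=$2 tmpdir chunk start end j part total=0
    tmpdir=$(mktemp -d)
    chunk=$(( (n + jobs - 1) / jobs ))   # ceiling division so every number is covered
    for (( j = 0; j < jobs; j++ )); do
        start=$(( j * chunk + 1 ))
        end=$(( start + chunk - 1 ))
        (( end > n )) && end=$n
        # Each chunk runs as its own process, which the OS may place on any core.
        sum_range "$start" "$end" > "$tmpdir/part$j" &
    done
    wait                                 # block until every background job has finished
    for part in "$tmpdir"/part*; do
        total=$(( total + $(cat "$part") ))
    done
    rm -rf "$tmpdir"
    echo "$total"
}

parallel_sum 100000 4   # prints 5000050000
```

The chunks never need each other's results until the final addition, which is what makes this kind of work a good fit for multiple cores.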

Even though a program has been designed with multithreading in mind, there is still the possibility that some of its functionality cannot be parallelized. One example of this is Microsoft Office applications that use Visual Basic for Applications (VBA) macros. A long-running macro is likely to consume an entire core until it terminates. Since a computer cannot automatically determine if a macro can be parallelized, it simply does not try to do it.
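The cost of a part that cannot be parallelized is commonly estimated with Amdahl's law, which is not something the discussion above names but fits it well: speedup = 1 / ((1 - p) + p / n), where p is the fraction of the work that can run in parallel and n is the number of cores. A small sketch, with a hypothetical helper name and `awk` doing the floating-point math:

```shell
#!/usr/bin/env bash
# Hypothetical helper: estimated speedup from Amdahl's law.
#   $1 = fraction of the work that can run in parallel (0.0 to 1.0)
#   $2 = number of cores
# awk is used because shell arithmetic is integer-only.
amdahl_speedup() {
    awk -v p="$1" -v n="$2" 'BEGIN { printf "%.2f\n", 1 / ((1 - p) + p / n) }'
}

amdahl_speedup 0.9 4   # mostly parallel work on four cores
amdahl_speedup 0.5 4   # half of the work is serial
amdahl_speedup 0.0 4   # fully serial, like a long-running macro
```

With half of the work stuck on one thread, four cores yield an estimated speedup of only 1.6 times, and a fully serial task gains nothing from the extra cores.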

Improve Performance of Single-Threaded Apps

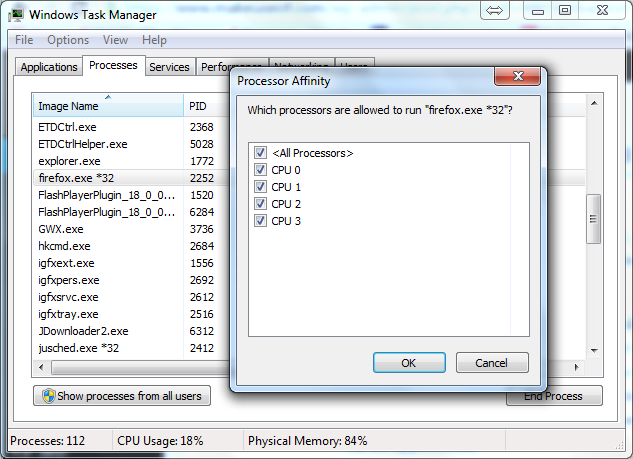

If you must use single-threaded legacy applications, especially if you need to run several of them simultaneously, your best bet for better performance is to set processor affinity for them. This forces each one to use only specific processing cores. Although each application can still consume an entire core's processing power, pinning them to different cores ensures they never compete for the same one, which would make them take longer to finish than necessary.

With Windows, you can set the affinity by opening Task Manager, right-clicking the process name (on the Details tab in recent versions of Windows), selecting Set Affinity... from the context menu, clearing the checkboxes for all processors you don't want it to use, then clicking OK.

You can also do this from the command line using the /affinity flag of the start command.

start /affinity 2 notepad.exe

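Note that the value passed to the affinity flag is a hexadecimal bitmask rather than a processor number. Bit 0 of the mask stands for CPU 0 as shown in Task Manager, bit 1 for CPU 1, and so on. A mask of 2 (binary 10) therefore starts Notepad on CPU 1, while a mask of 3 (binary 11) would allow it to run on CPUs 0 and 1.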
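The help text for `start` describes the /affinity value as a hexadecimal affinity mask, in which bit N stands for CPU N. Working the mask out by hand gets tedious beyond a couple of cores, so here is a small sketch of the calculation. It is written for a bash-compatible shell, and the helper name is made up for this example; the printed value is what you would pass to `start /affinity`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build a hexadecimal affinity mask from zero-based CPU numbers.
# Bit N of the mask stands for CPU N, so CPU 0 -> 1, CPU 1 -> 2, CPU 2 -> 4, and so on.
affinity_mask() {
    local mask=0 cpu
    for cpu in "$@"; do
        mask=$(( mask | (1 << cpu) ))
    done
    printf '%X\n' "$mask"
}

affinity_mask 1      # CPU 1 only      -> 2
affinity_mask 0 1    # CPUs 0 and 1    -> 3
affinity_mask 4 5    # CPUs 4 and 5    -> 30
```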

Similar functionality exists for Linux users with the taskset command. It is part of the util-linux package, which is included in the default installation of most distros. If it isn't currently on your system, you can install it with

sudo apt-get install util-linux

for Debian-based distributions or

sudo yum install util-linux

for Red Hat-based systems.

To use the command to start vlc on CPU 2, you would use

taskset -c 2 vlc

To change the affinity of a process that is already running with a process ID (PID) of 9021 so that it uses CPUs 4 and 5, you would use

taskset -cp 4,5 9021
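When several single-threaded programs need to run side by side, the pinning can be planned in one go. The sketch below only prints the `taskset` commands it would use, one CPU per program in round-robin order; the helper name and the `./job_*` program names are placeholders, not real tools.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print one "taskset -c N command" line per command,
# assigning CPUs round-robin so no two jobs share a core until every core has one.
#   $1      = number of CPUs to spread the jobs over
#   $2 ...  = commands to pin
pinning_plan() {
    local cpus=$1 i=0 cmd
    shift
    for cmd in "$@"; do
        echo "taskset -c $(( i % cpus )) $cmd"
        i=$(( i + 1 ))
    done
}

# Placeholder program names; nothing is launched, the plan is only printed.
pinning_plan 4 ./job_a ./job_b ./job_c
```

Printing the plan first makes it easy to review before anything is started; once it looks right, the lines can be run by hand or piped to a shell.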

The other factor with the applications is the word length. While a 32-bit application can still store and manipulate 64-bit integers, each operation has to be broken into several 32-bit steps by the compiler or a "big math" library, which takes longer than a 64-bit processor doing the same calculation natively. If an application requires the extended range offered by 64-bit numbers, it will generally be more efficient to use a 64-bit application for the task.
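To make the difference in range concrete, the sketch below emulates what happens when a value is forced into a signed 32-bit integer. It assumes bash, whose own arithmetic is 64-bit, and the function name is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: wrap a value into the signed 32-bit range
# (-2147483648 .. 2147483647), as a 32-bit register would.
wrap32() {
    echo $(( ( ($1 + 2147483648) & 0xFFFFFFFF ) - 2147483648 ))
}

wrap32 2147483647    # largest signed 32-bit value, unchanged
wrap32 2147483648    # one more wraps around to -2147483648
wrap32 3000000000    # out of range, wraps to -1294967296

# The same value is no problem for native 64-bit arithmetic:
echo $(( 3000000000 * 3 ))   # prints 9000000000
```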

With some software, it doesn't matter too much whether it is 32-bit or 64-bit. A 32-bit web browser will work just fine for most people. With normal usage, it should not require scads of memory, even if you like to have dozens of tabs open. It will easily use multiple threads across your physical and logical processors and should not end up CPU-bound. The same applies to most word processing tasks. But if you will be editing photos or video, transcoding, running modeling software, doing other CPU-intensive tasks, or working with large data sets, you could wait longer than necessary without multithreaded 64-bit software.

The Final Take

So what is the best option? Best answer: it depends.

If you know you will be running an Excel spreadsheet that uses long-running VBA macros, you would be better off with a dual-core system running at 3 GHz than a quad-core running at 2.2 GHz. If, however, you are constantly bouncing between multiple multithreaded programs while doing your computer work or play, the reverse is true.
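A back-of-the-envelope comparison shows why. The model below is a deliberate oversimplification and an assumption made for illustration: it treats a single-threaded job as limited by one core's clock speed and a well-threaded workload as able to use cores times clock, ignoring everything else that differs between processors. The helper name is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: combined clock rate across all cores, in MHz.
# Crude model only; it ignores cache, memory speed, and per-clock efficiency.
aggregate_mhz() {
    local cores=$1 mhz=$2
    echo $(( cores * mhz ))
}

echo "Single thread: dual-core 3000 MHz vs quad-core 2200 MHz"
echo "All cores:     dual-core $(aggregate_mhz 2 3000) MHz vs quad-core $(aggregate_mhz 4 2200) MHz"
```

The dual-core wins for the single-threaded macro (3000 versus 2200), while the quad-core has more total capacity (8800 versus 6000) when the workload can actually use every core.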

While generalizations never hold in all cases, the best desktop computing performance today will come from 64-bit multi-core processors running a modern 64-bit operating system and 64-bit multithreaded applications for the most demanding tasks.

What kind of experiences have you had with 64-bit performance? Do you have 32-bit software that outperforms its 64-bit counterpart, or is there no discernible difference between them? Let us know in the comments below.

Image Credit: Dismantling an old computer (CC by 2.0) by fdecomite, 4th Generation Intel® Core™ i7 Processor Front and Back (CC by 2.0) by Intel in Deutschland