Computer processors have advanced quite a bit in recent years. Transistors keep getting smaller, but shrinking them further is becoming so difficult that Moore's Law is getting hard to sustain.

When it comes to processors, it's not just the transistors and frequencies that count but also the cache.

You might have heard about cache memory when CPUs (Central Processing Units) are being discussed. However, we don't pay enough attention to these CPU cache memory numbers, nor are they the primary highlight of CPU advertisements.

So, exactly how important is CPU cache, and how does it work?

What Is CPU Cache Memory?

Put simply, a CPU memory cache is just a really fast type of memory. In the early days of computing, processor speed and memory speed were both low. However, during the 1980s, processor speeds began to increase rapidly. The system memory of the time (RAM) couldn't keep up with the increasing CPU speeds, so a new type of ultra-fast memory was born: CPU cache memory.

Now, your computer has multiple types of memory inside it.

Storage, like a hard disk or SSD, holds the bulk of your data: the operating system and programs.

Next up, we have "random access memory," commonly known as RAM. RAM is much faster than a hard disk or SSD, but it is only short-term storage: its contents are lost when the power goes off. Your computer and its programs use RAM to hold the data they are actively working with, helping keep actions on your computer nice and fast.

Lastly, the CPU has even faster memory units within itself, known as the CPU memory cache.

Computer memory has a hierarchy based on its operational speed. The CPU cache stands at the top of this hierarchy, being the fastest. It is also the closest to where the central processing occurs, being a part of the CPU itself. According to Tech Target, "Cache memory operates between 10 to 100 times faster than RAM, requiring only a few nanoseconds to respond to a CPU request."

Computer memory also comes in different types.

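Cache memory is a form of Static RAM (SRAM), while your regular system RAM is known as Dynamic RAM (DRAM). Unlike DRAM, SRAM can hold data without needing to be constantly refreshed, and it is considerably faster to access, which makes it ideal for cache memory. The trade-off is density and cost: a typical SRAM cell uses six transistors per bit, while a DRAM cell uses one transistor and a capacitor, which is why SRAM is reserved for small, fast caches rather than main memory.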
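To put the density difference in perspective, here is a back-of-the-envelope calculation in Python. It assumes the common six-transistor (6T) SRAM cell and a one-transistor DRAM cell, and it counts only the storage bits, ignoring tag arrays, error correction, and control logic, so treat the results as rough lower bounds rather than real die figures.

```python
# Back-of-the-envelope transistor counts for storing cache data.
# Assumptions: one six-transistor (6T) cell per SRAM bit and one transistor
# (plus a capacitor) per DRAM bit. Tag arrays, error correction and control
# logic are ignored, so real caches need more than this.

SRAM_TRANSISTORS_PER_BIT = 6
DRAM_TRANSISTORS_PER_BIT = 1


def bits_in(size_mib: int) -> int:
    """Number of bits in size_mib mebibytes."""
    return size_mib * 1024 * 1024 * 8


def sram_transistors(size_mib: int) -> int:
    """Transistors needed to store size_mib MiB in 6T SRAM cells."""
    return bits_in(size_mib) * SRAM_TRANSISTORS_PER_BIT


def dram_transistors(size_mib: int) -> int:
    """Transistors needed to store size_mib MiB in 1T DRAM cells."""
    return bits_in(size_mib) * DRAM_TRANSISTORS_PER_BIT


if __name__ == "__main__":
    # A 32MB cache, the L3 size quoted for the Ryzen 5 5600X.
    print(f"SRAM: {sram_transistors(32):,} transistors")  # SRAM: 1,610,612,736 transistors
    print(f"DRAM: {dram_transistors(32):,} transistors")  # DRAM: 268,435,456 transistors
```

Needing six times as many transistors for every bit is a large part of why SRAM is used for megabytes of cache rather than gigabytes of system memory.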

How Does CPU Cache Work?

Programs and apps on your computer are designed as a set of instructions that the CPU interprets and runs. When you run a program, the instructions make their way from storage (your hard drive or SSD) to the CPU. This is where the memory hierarchy comes into play.

The data first gets loaded into RAM and is then sent to the CPU. Modern CPUs can carry out billions of instructions per second. To make full use of that power, the CPU needs access to super-fast memory, which is where the CPU cache comes in.
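A quick way to see why the CPU needs something faster than RAM is to count how many clock cycles tick by while it waits for a single memory access. The Python sketch below does that arithmetic; the 4 GHz clock, 80 ns RAM latency, and 1 ns cache latency are illustrative assumptions, not specifications of any particular CPU or memory kit.

```python
# Clock cycles that pass while the CPU waits on a single memory access.
# The clock speed and latencies used below are illustrative assumptions,
# not figures for any specific CPU or memory kit.


def stall_cycles(clock_ghz: float, latency_ns: float) -> float:
    """Cycles elapsed during an access taking latency_ns at clock_ghz."""
    # A clock of N GHz completes N cycles every nanosecond.
    return clock_ghz * latency_ns


if __name__ == "__main__":
    clock_ghz = 4.0        # assumed CPU clock speed
    ram_latency_ns = 80.0  # assumed latency of a trip to system RAM
    l1_latency_ns = 1.0    # assumed latency of an L1 cache hit

    print(f"RAM access: {stall_cycles(clock_ghz, ram_latency_ns):.0f} cycles")  # 320
    print(f"L1 hit:     {stall_cycles(clock_ghz, l1_latency_ns):.0f} cycles")   # 4
```

Because a modern core can issue several instructions per cycle, each of those hundreds of waiting cycles is potentially several instructions' worth of lost work.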

The memory controller takes the data from RAM and sends it to the CPU cache. Depending on your system, the memory controller sits on the CPU itself (as on modern processors) or on the Northbridge chipset of older motherboards.

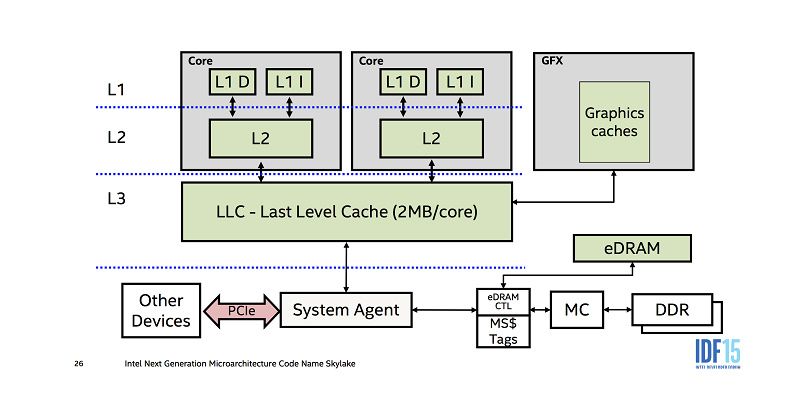

The cache then handles the back-and-forth flow of data within the CPU. There is a memory hierarchy within the CPU cache, too.

The Levels of CPU Cache Memory: L1, L2, and L3

CPU cache memory is divided into three "levels": L1, L2, and L3. Once again, the hierarchy is ordered by speed, and speed comes at the cost of size: the faster the level, the smaller it is.

So, does the CPU cache size make a difference to performance?

L1 Cache

L1 (Level 1) cache is the fastest memory in a computer system, apart from the CPU's own registers. In terms of priority of access, the L1 cache holds the data the CPU is most likely to need while completing a certain task.

The size of the L1 cache depends on the CPU. Some top-end consumer CPUs, like the Intel i9-9980XE, now feature around 1MB of L1 cache in total across all their cores, but these cost a huge amount of money and are still few and far between. Some server processors, like Intel's Xeon range, also feature 1-2MB of L1 cache in total.

There is no "standard" L1 cache size, so you must check the CPU specs to determine the exact L1 memory cache size before purchasing.

The L1 cache is usually split into two sections: the instruction cache and the data cache. The instruction cache holds the instructions the CPU is about to execute, that is, the operations it must perform, while the data cache holds the data those operations work on.

L2 Cache

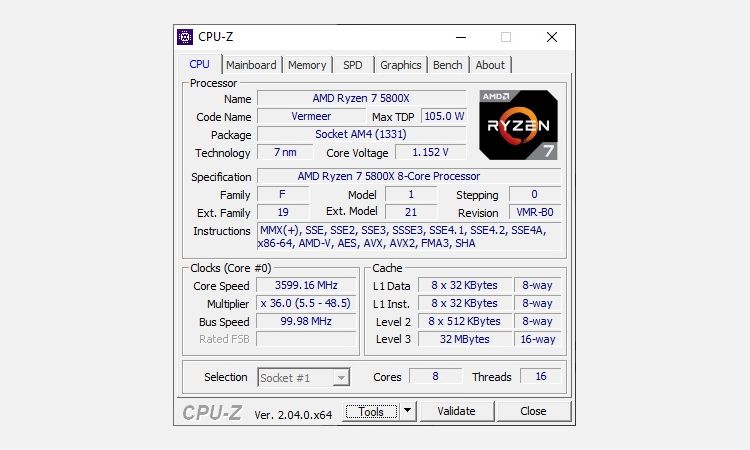

L2 (Level 2) cache is slower than the L1 cache but bigger in size. Where an L1 cache may measure in kilobytes, modern L2 memory caches measure in megabytes. For example, AMD's highly rated Ryzen 5 5600X has a 384KB L1 cache and a 3MB L2 cache (plus a 32MB L3 cache).

The L2 cache size varies depending on the CPU, but it is typically between 256KB and 32MB. Most modern CPUs pack more than 256KB of L2 cache, and that size is now considered small. Furthermore, some of the most powerful modern CPUs have an L2 memory cache vastly exceeding 8MB. For example,

When it comes to speed, the L2 cache lags behind the L1 cache but is still much faster than your system RAM. The L1 memory cache is typically 100 times faster than your RAM, while the L2 cache is around 25 times faster.

L3 Cache

Onto the L3 (Level 3) cache. In the early days, the L3 memory cache was actually found on the motherboard. This was a very long time ago, back when most CPUs were just single-core processors. Now, the L3 cache in your CPU can be massive: top-end consumer CPUs typically feature L3 caches of up to 32MB, while AMD's revolutionary Ryzen 7 5800X3D comes with 96MB of L3 cache. Some server CPUs go much further, with L3 caches of 256MB or more.

The L3 cache is the largest but also the slowest cache memory unit. Modern CPUs include the L3 cache on the CPU itself. But while each core typically has its own L1 and L2 cache, the L3 cache is more akin to a general memory pool that the entire chip can make use of.

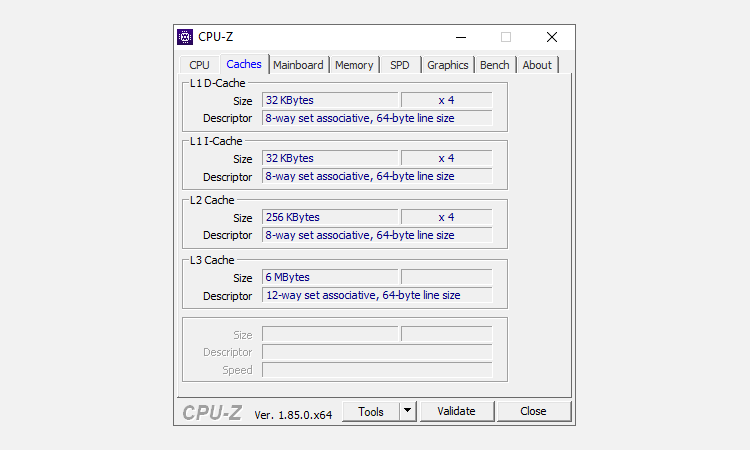

The following images show the CPU memory cache levels for an Intel Core i5-3570K CPU launched in 2012 and an AMD Ryzen 7 5800X CPU, launched eight years later, in 2020. The CPU cache data is in the bottom right corner of the second image.

Note how, on both CPUs, the L1 cache is split into two, while the L2 and L3 caches get progressively bigger. Yet the AMD Ryzen 7 5800X's L3 cache is more than five times larger than the Intel i5-3570K's (32MB versus 6MB).

How Much CPU Cache Memory Do I Need?

It's a good question. More is better, as you might expect. The latest CPUs will naturally include more CPU cache memory than older generations, with potentially faster cache memory, too. One thing you can do is learn how to compare CPUs effectively. There is a lot of information out there, and learning how to compare and contrast different CPUs can help you make the right purchasing decision.

Cache memory design is always evolving, especially as memory gets cheaper, faster, and denser. For example, one of AMD's most recent innovations is Smart Access Memory and the Infinity Cache, both of which increase performance.

How Does Data Move Between CPU Memory Caches?

The big question: how does CPU cache memory work?

In the most basic terms, data flows from RAM to the L3 cache, then to L2, and finally to L1. When the processor is looking for data to carry out an operation, it first tries to find it in the L1 cache. If the CPU finds it there, the condition is called a cache hit. If it doesn't, it looks in L2 and then in L3.

Each time the CPU fails to find the data it needs in a cache level, that is known as a cache miss. If the data is missing from every level, the CPU has to fetch it from your system memory (RAM).
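The lookup order described above (L1, then L2, then L3, then system RAM) can be sketched as a toy Python model. It is a deliberate simplification: each cache level is just a set of addresses with no size limit, no eviction, and no cache lines, and every level that misses copies the data in on the way back. Real CPUs use a variety of inclusion and fill policies. The `CacheLevel` class and `lookup` function are hypothetical names for illustration only.

```python
# A toy model of the cache lookup order: L1, then L2, then L3, then RAM.
# Simplifications (assumptions): each level is an unbounded set of
# addresses, with no eviction and no cache lines.

from dataclasses import dataclass, field


@dataclass
class CacheLevel:
    name: str
    addresses: set[int] = field(default_factory=set)


def lookup(levels: list[CacheLevel], address: int) -> str:
    """Return the name of the level that served `address`.

    Levels are searched fastest-first. Every level that missed is filled
    on the way back, so a repeat access to the same address hits in L1.
    """
    missed = []
    for level in levels:
        if address in level.addresses:
            source = level.name  # cache hit at this level
            break
        missed.append(level)     # cache miss: try the next, slower level
    else:
        source = "RAM"           # missed every cache level
    for level in missed:
        level.addresses.add(address)
    return source


if __name__ == "__main__":
    l1, l2, l3 = CacheLevel("L1"), CacheLevel("L2"), CacheLevel("L3", {0x40})
    caches = [l1, l2, l3]
    print(lookup(caches, 0x40))  # L3  (missed in L1 and L2)
    print(lookup(caches, 0x40))  # L1  (filled on the previous access)
    print(lookup(caches, 0x80))  # RAM (not cached anywhere yet)
```

The second access to `0x40` hitting in L1 shows why caches pay off: once data has been fetched, repeated use of it is served from the fastest level.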

Now, as we know, the cache is designed to speed up the back and forth of information between the main memory and the CPU. The time needed to access data from memory is called "latency."

L1 cache memory has the lowest latency, being the fastest and closest to the core, and L3 has the highest. Latency rises sharply on a cache miss, because the CPU then has to retrieve the data from the much slower system memory.
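The combined effect of hits and misses is commonly summarized as the average memory access time (AMAT): each level's hit time, plus its miss rate multiplied by the cost of going one level further out. The Python sketch below applies that formula to a three-level hierarchy. Only the "L1 roughly 100 times faster, L2 roughly 25 times faster than RAM" ratios come from this article; the 80 ns RAM latency, the 10 ns L3 latency, and all of the miss rates are assumptions chosen for illustration, not measurements.

```python
# Average memory access time (AMAT) for a three-level cache hierarchy.
# Only the "L1 ~100x faster, L2 ~25x faster than RAM" ratios come from the
# article; every other number here is an illustrative assumption.

RAM_NS = 80.0         # assumed system RAM latency
L1_NS = RAM_NS / 100  # L1 roughly 100 times faster than RAM
L2_NS = RAM_NS / 25   # L2 roughly 25 times faster than RAM
L3_NS = 10.0          # assumed L3 latency


def amat(levels: list[tuple[float, float]], memory_ns: float) -> float:
    """Average access time in ns.

    `levels` holds (hit_time_ns, local_miss_rate) pairs ordered from L1
    outward. Working from the outermost level inward, each level costs its
    hit time plus its miss rate times the cost of the next level out.
    """
    time_ns = memory_ns
    for hit_ns, miss_rate in reversed(levels):
        time_ns = hit_ns + miss_rate * time_ns
    return time_ns


if __name__ == "__main__":
    # Assumed local miss rates: 5% in L1, 40% in L2, 50% in L3.
    hierarchy = [(L1_NS, 0.05), (L2_NS, 0.40), (L3_NS, 0.50)]
    print(f"With caches: {amat(hierarchy, RAM_NS):.2f} ns")  # 1.96 ns
    # The same hierarchy, but with the L1 miss rate doubled to 10%.
    worse_l1 = [(L1_NS, 0.10), (L2_NS, 0.40), (L3_NS, 0.50)]
    print(f"Worse L1:    {amat(worse_l1, RAM_NS):.2f} ns")   # 3.12 ns
    print(f"RAM only:    {amat([], RAM_NS):.2f} ns")         # 80.00 ns
```

Under these assumptions, the caches bring the average access down from 80 ns to about 2 ns, and even a modest rise in L1 misses noticeably increases it, which is why both cache size and cache latency matter.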

Latency continues to decrease as computers become faster and more efficient. Low-latency DDR4 and DDR5 RAM and super-fast SSDs cut down latency, making your entire system faster than ever. This is why the speed of your system memory matters, too.

CPU Cache Speed Explained

CPU cache size and speed are important to the overall operation of your computer. As with most issues relating to computer hardware, more is better, and faster is always the smart choice.

However, you shouldn't let CPU cache become the deciding factor when it comes to buying a new CPU. Sure, more and faster is better, but you also need to consider other important CPU performance factors, such as the number of cores, CPU clock speed, and so on.