What happens when the Internet gets too big for the Internet? The 12th of August saw widespread disruption to Internet users worldwide, as multiple Internet routers fell victim to a serious problem with how Internet traffic is managed, on a day that has become known as '512K Day'.

Affected users saw drastically increased ping times, with many websites failing to load altogether.

The issue - which had been predicted for a long time - was caused by the table used to work out how to reach IPv4 addresses exceeding its limit of 512K (524,288) routes. This caused the older routers that major ISPs still use to suffer memory overflows and crashes, leaving users facing downtime and performance issues.

Affected ISPs - which include BT, Comcast, AT&T, Sprint and Verizon - all reported serious performance issues for some part of Tuesday, with some Web hosting companies being knocked offline altogether.

Curious about the finer details of what went down on '512K Day'? Read on for more information.

Border Gateway Protocol and You

When you visit a website, you usually type in a domain name. Domain names are human-readable addresses that let you reach a website without typing its IP address into your Web browser. Your computer then looks the name up using the Domain Name System (DNS), which returns a numeric IP address - or, for the latest generation of IP addressing (IPv6), one written in hexadecimal - that works almost like the phone number of the website you want to visit.
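To make the 'phone number' idea concrete, here is a short sketch using Python's standard `ipaddress` module. It shows that an IPv4 address is really a 32-bit number and an IPv6 address a 128-bit number written in hexadecimal. The addresses come from ranges reserved for documentation rather than any real website, and `describe` is a hypothetical helper written for this example.

```python
import ipaddress


def describe(address: str) -> str:
    """Return the IP version, the compact text form and the underlying integer."""
    ip = ipaddress.ip_address(address)
    return f"IPv{ip.version}: {ip.compressed} = {int(ip)}"


# Documentation-only addresses (RFC 5737 for IPv4, RFC 3849 for IPv6).
print(describe("192.0.2.10"))    # IPv4: 192.0.2.10 = 3221225994
print(describe("2001:db8::10"))

# Written out in full, an IPv6 address is eight groups of hexadecimal digits.
print(ipaddress.ip_address("2001:db8::10").exploded)
# 2001:0db8:0000:0000:0000:0000:0000:0010

# IPv4 addresses are 32 bits long, which caps the pool at about 4.3 billion.
print(2 ** 32)                   # 4294967296
```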

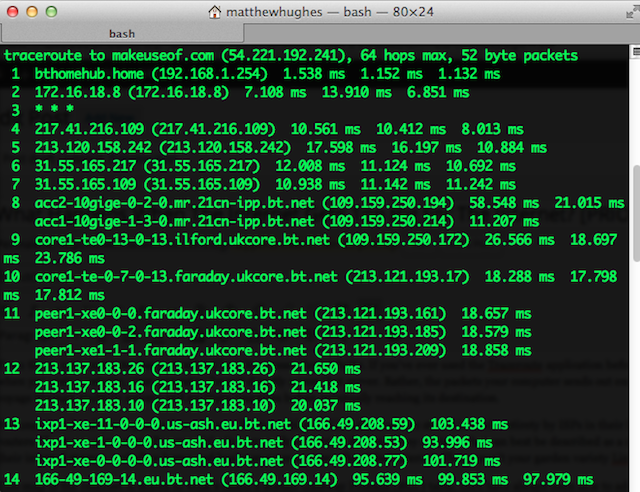

Next, your traffic has to find its way to that address. If you've ever used the Traceroute application, you'll know that when you visit a website, your computer doesn't connect to that server directly. Rather, the packets it sends out embark upon an unusual voyage through multiple routers, and sometimes multiple countries, before eventually reaching their destination.

Fortunately, much of this is worked out in advance. ISPs' high-performance routers store routes to every block of IP addresses on the Internet. These are phenomenally powerful, phenomenally expensive devices. They hold what can best be described as a map of the Internet in their memory, and allow home and business users to reach the global Internet. These aren't your garden variety Linksys boxes.
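Routers consult this map using a rule called longest-prefix match: of all the stored routes that cover a destination address, the most specific one wins. Below is a minimal Python sketch, assuming a tiny hypothetical table with made-up next-hop names. Real routers perform the same lookup in specialised hardware and data structures rather than with a linear scan like this one.

```python
import ipaddress

# A toy forwarding table: prefix -> next hop. Prefixes are documentation
# ranges and the next-hop names are hypothetical.
ROUTES = {
    ipaddress.ip_network("0.0.0.0/0"): "default-upstream",
    ipaddress.ip_network("198.51.100.0/24"): "peer-A",
    ipaddress.ip_network("198.51.100.128/25"): "peer-B",
    ipaddress.ip_network("203.0.113.0/24"): "customer-C",
}


def lookup(destination: str, routes=ROUTES) -> str:
    """Return the next hop of the most specific prefix containing destination."""
    ip = ipaddress.ip_address(destination)
    matches = [net for net in routes if ip in net]
    best = max(matches, key=lambda net: net.prefixlen)  # longest prefix wins
    return routes[best]


print(lookup("198.51.100.7"))    # peer-A (only the /24 and the default match)
print(lookup("198.51.100.200"))  # peer-B (the /25 is more specific than the /24)
print(lookup("192.0.2.1"))       # default-upstream (nothing else matches)
```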

This map of the Internet is stored in what's called a Border Gateway Protocol (BGP) table. ISPs have always been able to add new routes by announcing them to the networks they connect to, which pass them on in turn until the route has reached ISPs across the globe. Whenever a new route is announced, every router's copy of the table is updated automatically to reflect that change. This also means that when one network makes a mistake in what it announces, it can affect users everywhere.
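How a single announcement spreads can be sketched as a toy simulation. In this Python example, the five-network topology, the `propagate` helper and the 'keep the shortest path' rule are all simplifying assumptions; real BGP applies each network's own routing policies and a longer list of tie-breakers. It does show the key property: once one network announces a route, every other network ends up with it in its table, whether or not the route is correct.

```python
from collections import deque

# Hypothetical topology: which networks (autonomous systems) peer with which.
NEIGHBOURS = {
    "AS1": ["AS2", "AS3"],
    "AS2": ["AS1", "AS4"],
    "AS3": ["AS1", "AS4"],
    "AS4": ["AS2", "AS3", "AS5"],
    "AS5": ["AS4"],
}


def propagate(origin: str, prefix: str, neighbours=NEIGHBOURS) -> dict:
    """Flood one announcement outward; each AS keeps the shortest path it hears.

    Each stored path runs from the holding AS back to the origin, inclusive.
    Routing policies and BGP's real tie-breaking rules are deliberately ignored.
    """
    tables = {origin: {prefix: [origin]}}
    queue = deque([origin])
    while queue:
        current = queue.popleft()
        path = tables[current][prefix]
        for peer in neighbours[current]:
            if peer in path:  # loop prevention: reject paths that already contain you
                continue
            new_path = [peer] + path
            known = tables.get(peer, {}).get(prefix)
            if known is None or len(new_path) < len(known):
                tables.setdefault(peer, {})[prefix] = new_path
                queue.append(peer)  # the peer now re-announces to its own neighbours
    return tables


tables = propagate("AS5", "203.0.113.0/24")
for asn in sorted(tables):
    print(asn, " -> ".join(tables[asn]["203.0.113.0/24"]))
```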

Perhaps the most notorious example of this came in 2008, when Pakistan Telecom was ordered to block YouTube within Pakistan. To do so, it announced a more specific route to part of YouTube's address space. That announcement was meant to stay inside Pakistan, but it leaked to the wider Internet, propagated worldwide, and ended up blocking YouTube for everyone.
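Longest-prefix match is what made the 2008 incident so effective. Post-incident analyses report that YouTube announced 208.65.152.0/22, while Pakistan Telecom announced the narrower 208.65.153.0/24 inside it. The Python sketch below, with a hypothetical `best_route` helper and arbitrary addresses picked from those ranges, shows why every router that heard both announcements preferred the more specific /24.

```python
import ipaddress

# The two announcements reported for the 2008 incident.
ANNOUNCEMENTS = {
    ipaddress.ip_network("208.65.152.0/22"): "YouTube",
    ipaddress.ip_network("208.65.153.0/24"): "Pakistan Telecom",
}


def best_route(destination: str, routes=ANNOUNCEMENTS) -> str:
    """Return the origin of the most specific announcement covering destination."""
    ip = ipaddress.ip_address(destination)
    covering = [net for net in routes if ip in net]
    return routes[max(covering, key=lambda net: net.prefixlen)]


hijacked = ipaddress.ip_network("208.65.153.0/24")
print(hijacked.subnet_of(ipaddress.ip_network("208.65.152.0/22")))  # True
print(best_route("208.65.153.10"))  # Pakistan Telecom: the /24 beats the /22
print(best_route("208.65.154.10"))  # YouTube: only the /22 covers this address
```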

The routers that hold the BGP table have a fixed amount of memory allocated specifically for this purpose. It is measured in routes, with the default limit artificially set at 512K routes for IPv4 addresses, plus an additional 512K routes for IPv6 addresses. Although many had predicted for years that the BGP table would outgrow 512K routes, for a long time it never came close to this limit. The size allocated was more than sufficient. And then suddenly, it wasn't.
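What 'running out of room' means can be illustrated with a deliberately simplified model. In this Python sketch, the `FixedSizeFib` class and its fallback behaviour are assumptions for illustration: routes that fit go into fast hardware memory, and anything beyond the capacity is pushed to a slow software path. Real routers varied in how they failed, and some simply crashed.

```python
# A simplified, hypothetical model of a router's fixed-size forwarding table.
# "512K" means 512 * 1024 = 524,288 entries.
HARDWARE_CAPACITY = 512 * 1024


class FixedSizeFib:
    """Toy forwarding table: fast hardware slots first, slow software fallback after."""

    def __init__(self, capacity: int = HARDWARE_CAPACITY) -> None:
        self.capacity = capacity
        self.hardware = set()  # routes forwarded at full speed
        self.software = set()  # overflow routes, handled slowly by the CPU

    def install(self, prefix: str) -> str:
        """Install a route and report where it ended up."""
        if prefix in self.hardware:
            return "hardware"
        if len(self.hardware) < self.capacity:
            self.hardware.add(prefix)
            return "hardware"
        self.software.add(prefix)
        return "software"


print(HARDWARE_CAPACITY)  # 524288

# Shrink the table to 3 slots so the overflow is easy to see.
small = FixedSizeFib(capacity=3)
placements = [small.install(f"10.0.{i}.0/24") for i in range(5)]
print(placements)  # ['hardware', 'hardware', 'hardware', 'software', 'software']
```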

So, what happened?

A few things, really. The first, and most glaringly obvious, problem was with the ISPs themselves. Years of underinvestment had left many running woefully outdated routers. These machines are supposed to be able to handle the traffic of millions of users, and yet found themselves totally unprepared for a much-predicted milestone in the size of the BGP table.

Another issue was with the type of address we use to uniquely identify servers on the Internet. Until recently, we've almost exclusively used IPv4 addresses, which are 32 bits long, so only about 4.3 billion of them exist. The exhaustion of this pool has been looming over us for years, and we've found a number of graceless responses to this problem.

One of the techniques used to mitigate a shortfall of these addresses came from the Internet Engineering Task Force (IETF): Classless Inter-Domain Routing (CIDR). CIDR replaced the old fixed-size address classes with blocks of any size, effectively 'subnetting' the IP addressing system so that addresses could be handed out more efficiently. This slowed the exhaustion of IPv4 addresses, but it came with unintended consequences. As address space was carved into ever-smaller blocks, each announced as its own route, the BGP table fragmented and swelled to an unmanageable size, bringing 512K Day ever closer.
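The fragmentation effect is easy to see with Python's `ipaddress` module. The sketch below uses an arbitrary private /16 as a stand-in for any block of address space: announced as one block it costs a single routing-table entry, while the same addresses carved into /24s cost 256. If the pieces end up scattered among different owners, they can no longer be merged back into one route.

```python
import ipaddress

# An arbitrary private /16, standing in for any block of address space.
block = ipaddress.ip_network("10.0.0.0/16")

# Announced as one aggregate, the whole block costs a single routing-table entry.
aggregate = [block]

# Carved into /24s and announced separately, the same addresses cost 256 entries.
fragments = list(block.subnets(new_prefix=24))

# If the /24s stay together, collapse_addresses merges them back into one route.
merged = list(ipaddress.collapse_addresses(fragments))

# If every other /24 moves to a different owner, the remaining pieces are no
# longer contiguous and cannot be merged back.
scattered = list(ipaddress.collapse_addresses(fragments[::2]))

print(len(aggregate), len(fragments), len(merged), len(scattered))  # 1 256 1 128
```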

And then, we have to accept that the Internet has been a victim of its own success. More users, more websites and more ISPs have resulted in more routes to map. More routes to map means a larger BGP table. A larger BGP table means... Well, you get the idea.

What's been done?

To the credit of the ISPs, they resolved the issue phenomenally quickly. In the interim, some effective (albeit ugly) workarounds were put in place to keep downtime as short as possible. Artificial limits on the BGP routing table were swiftly increased, and older hardware that physically cannot handle the larger routing table will be decommissioned and replaced with newer hardware.

Fingers crossed, we might not have to face another '512K Day' for a long, long while.

Were you impacted by the disruption?