Many functions that used to be separate parts of a computer have been integrated over the last decade as both motherboards and processors have become smaller and more efficient. Many modern desktops ship without a single PCI card.

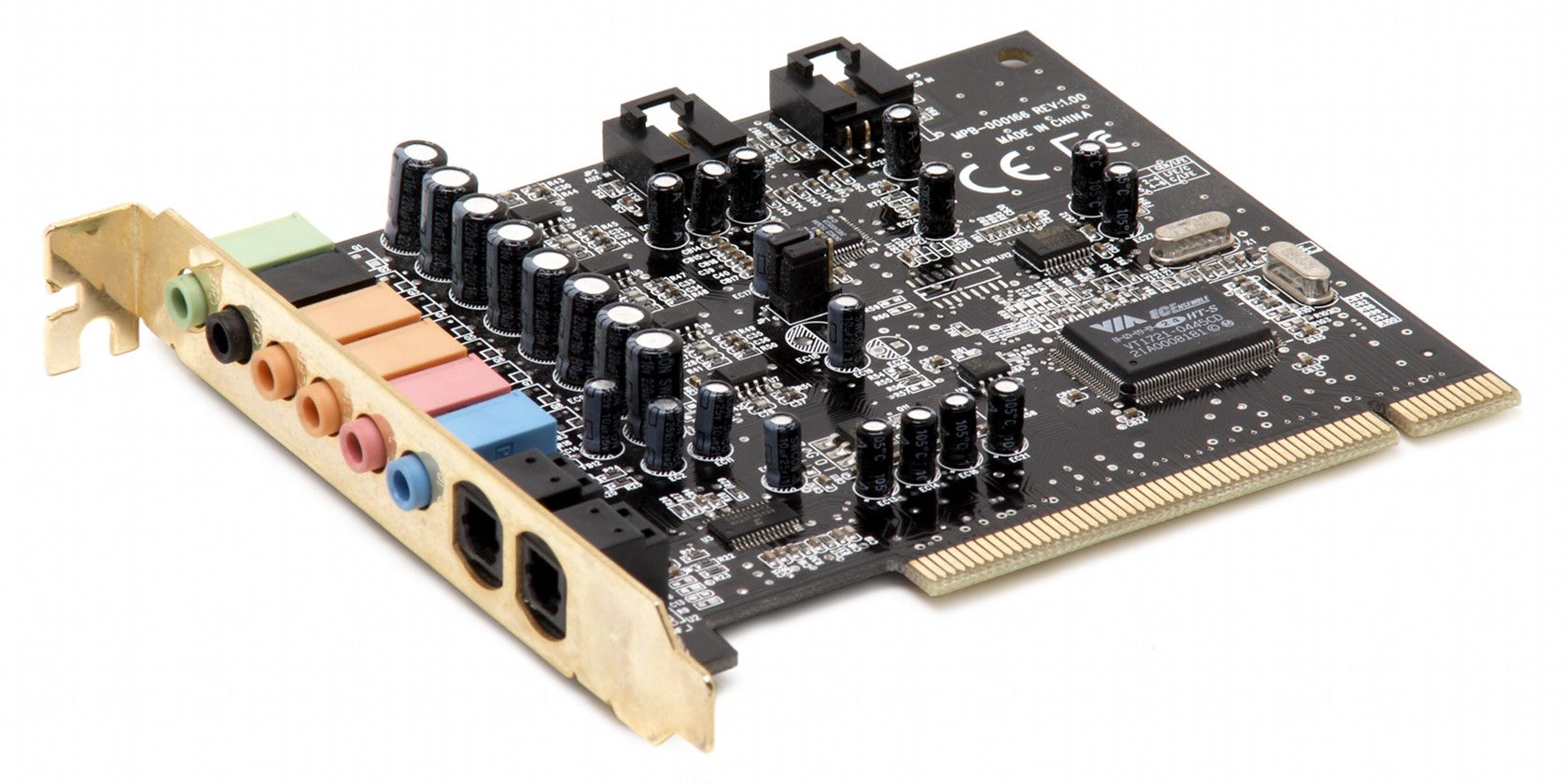

Sound cards are among the formerly discrete components that are now a part of the average PC’s motherboard. That has damaged the market for sound cards, of course, but there’s still a niche of high-end cards that promise better sound quality relative to integrated alternatives. Is there any truth to this claim, or is it expensive snake oil?

What Does A Sound Card Do?

The function of a sound card is obvious: to produce sound. What’s less obvious is why dedicated hardware is needed for the task. Audio seems simple, after all, so why worry about hardware processing at all?

It is true that audio is not as demanding as video, as there’s simply less information involved, but that doesn't mean the task is entirely trivial. Audio can consume some processor cycles, so off-loading it to a dedicated chip is preferable. Most motherboards now have a chip on-board, but those used on a dedicated sound card are typically more robust. A more advanced audio chip can also include hardware that enables features like virtual surround sound, a pre-amp, or niche audio formats.
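To put rough numbers on "less information," here is a minimal Python sketch comparing uncompressed data rates. The figures are assumptions chosen for illustration (CD-quality stereo audio and uncompressed 1080p video at 60 frames per second), not measurements of any particular sound card or game, and the function names are hypothetical.

```python
def pcm_audio_bitrate(sample_rate_hz, bit_depth, channels):
    """Bits per second for uncompressed PCM audio."""
    return sample_rate_hz * bit_depth * channels


def raw_video_bitrate(width, height, bits_per_pixel, fps):
    """Bits per second for uncompressed video frames."""
    return width * height * bits_per_pixel * fps


# Assumed example formats: CD-quality stereo vs. uncompressed 1080p at 60 fps.
audio_bps = pcm_audio_bitrate(44_100, 16, 2)
video_bps = raw_video_bitrate(1920, 1080, 24, 60)

print(f"Audio: {audio_bps / 1e6:.2f} Mbit/s")
print(f"Video: {video_bps / 1e6:.2f} Mbit/s")
print(f"Video carries roughly {video_bps / audio_bps:.0f}x more raw data")
```

Under these assumptions the audio stream is about 1.4 Mbit/s, a tiny fraction of the video stream. That gap is why audio is the lighter job, though mixing many sources and applying effects still costs some cycles.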

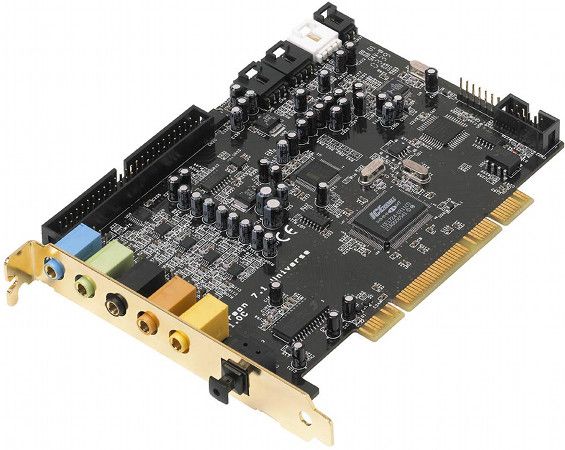

Sound cards are also responsible for expanding audio output. Almost all motherboards, no matter the on-board audio used, offer no better than 5.1 audio via standard 3.5mm jacks. Some don’t even manage that. Software options are often limited, too, leaving the user with few ways to tailor audio output to personal tastes. A sound card is usually required to make a PC compatible with 7.1 audio and common home theater outputs like S/PDIF.

Does A Sound Card Really Produce Better Audio?

Judging audio quality is a tricky subject because, well, it’s mostly subjective. Standards exist, but reviewers generally don’t have the laboratory-grade equipment used by manufacturers to calibrate premium audio hardware. Discovering the difference usually requires blind comparison tests.

Fortunately, there’s still one site that does this: The Tech Report. They’ve conducted several discrete sound card reviews over the last few years, the latest of which covered the low-cost ASUS Xonar cards. They use a combination of testing hardware and blind listening tests to determine the quality, and have consistently found that discrete cards are preferable to integrated audio.

With that said, though, a difference in audio quality can be hard to notice in games. There are two reasons for this. First, games often focus on visuals rather than sound, which means players usually can’t concentrate on the audio. Second, games don’t always have high-quality source audio, which makes better hardware pointless.

Improved surround sound is the one benefit games can sometimes expect to receive. Certain games will have surround-sound modes that only work with hardware audio, and some audio cards have virtual surround sound modes designed to improve the quality of source content. Both can lead to a more immersive experience.

Buyers should remember that their headphones or speakers affect quality, too. If you only own a $100 2.1 audio system, a sound card probably won’t be worthwhile, as your speakers won’t be able to reproduce a noticeable difference.

What About Performance?

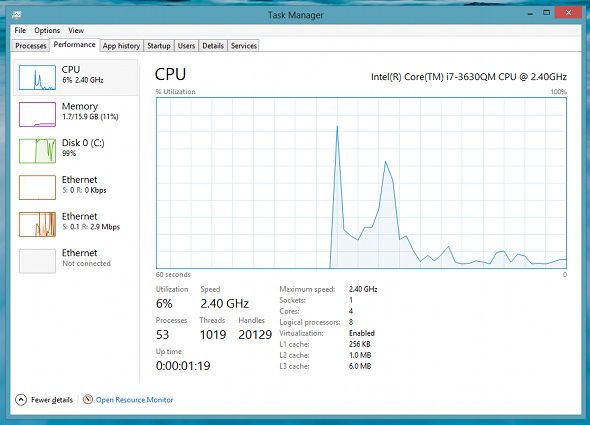

As mentioned earlier, audio hardware can reduce processor load by off-loading the task from the CPU. This can improve performance, but does it matter enough to be noticeable in games?

No, not really. Motherboard audio handles the task well enough, and today’s games generally aren’t bound by processor performance, so they won’t run more quickly if a sound card is installed. There’s also not much difference in latency (the time it takes for audio to reach your speakers). Discrete cards are often slower in this regard because they apply additional processing that an integrated chip doesn’t offer, but the difference is too small to notice.
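For a sense of scale, one common contributor to audio latency is the output buffer: a sample cannot play until the buffer ahead of it drains. The sketch below estimates that delay from buffer size and sample rate. The buffer sizes and the 48 kHz rate are assumptions picked for illustration, the function name is hypothetical, and real drivers add other delays on top of this.

```python
def buffer_latency_ms(buffer_frames, sample_rate_hz):
    """Time in milliseconds needed to play out one buffer of audio frames."""
    return buffer_frames / sample_rate_hz * 1000


# Assumed buffer sizes at an assumed 48 kHz sample rate.
for frames in (64, 256, 1024):
    print(f"{frames:>4} frames -> {buffer_latency_ms(frames, 48_000):.2f} ms")
```

Even the largest buffer in this example works out to a little over 21 milliseconds, which gives a feel for why small differences in processing between a discrete card and an integrated chip are hard to hear.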

So, Should A PC Gamer Buy A Sound Card?

If audio quality in games is your sole concern, the answer is a definitive no. Any difference will be hard to notice, and some titles don’t output audio that’s good enough to make the hardware matter. Games also tend to focus on visuals, so there are few audio sequences that last long enough for the player to appreciate. You’ll be better off spending your money on some other hardcore gaming peripheral.

There’s one feature that can make a sound card sensible: surround sound. Not all integrated audio chips handle it well, which may lead to sound that seems flat or poorly staged, even with a headset that’s designed to provide great surround. In this case you’ll need a sound card to get the best results.

Sound cards are most important to users who often watch movies or listen to music. In these situations a difference in quality is easier to notice, and the quality of the source is often quite good, even excellent, so better hardware will shine. These users might also want to hook up a premium 7.1 system or a larger subwoofer, hardware most motherboard audio can’t support.

Image Credit: Evan-Amos/Wikipedia