In the world of data storage, there have been numerous breakthroughs, and even more flops that went absolutely nowhere. For every successful piece of data storage technology, there have been dozens more that were laughably bad.

Let's take a look at some of the technologies that shaped modern data storage, as well as where we go from here.

Historical Data Storage Timeline

Data storage formats come and go, but the one consistent factor is Moore's law: the observation that the number of transistors that fit on an integrated circuit doubles approximately every two years. While the original law spoke only to transistor counts, it has since been unofficially expanded to apply to technology as a whole, and its ability to (roughly) double computing power every two years.
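To get a feel for how quickly that doubling compounds, here is a minimal sketch. It assumes a clean two-year doubling period, which is the popular reading of the law rather than a measured figure, and the function name is made up for illustration.

```python
def moores_law_factor(years: float, doubling_period_years: float = 2.0) -> float:
    """Growth factor after `years` if capacity doubles every `doubling_period_years`."""
    return 2.0 ** (years / doubling_period_years)


# Print the compounded growth for a few time spans.
for years in (2, 10, 20, 40):
    print(f"{years:>2} years -> about {moores_law_factor(years):,.0f}x")
```

Under that assumption, twenty years of doubling works out to roughly a thousandfold increase.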

While we're getting to a stage that's close to "Peak Moore's Law" in that we're not necessarily doubling computing power nearly as fast as we were a decade or two ago, the effect still holds true to the extent that every two years we seem to barrel through a wall we previously thought impassable, or at least currently impassable.

You can see just how applicable the law is when you start to line up technologies side-by-side and realize just how far they've progressed in the form of data storage.

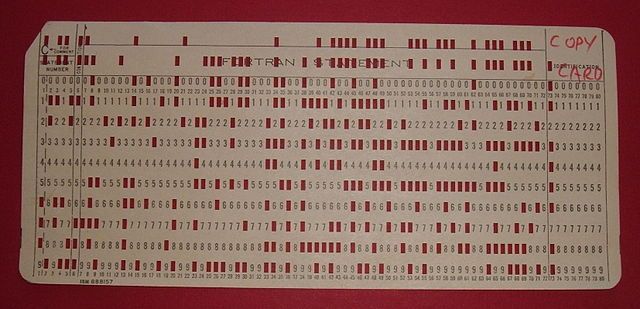

Punch Cards (or Punched Cards) and Paper Tape (1700s)

Punch cards feature heavy card stock along with a rudimentary grid pattern. Along this pattern, specific slots are "punched" out, which allows for easy scanning (by a computer or card reader) for data-heavy projects and tasks.

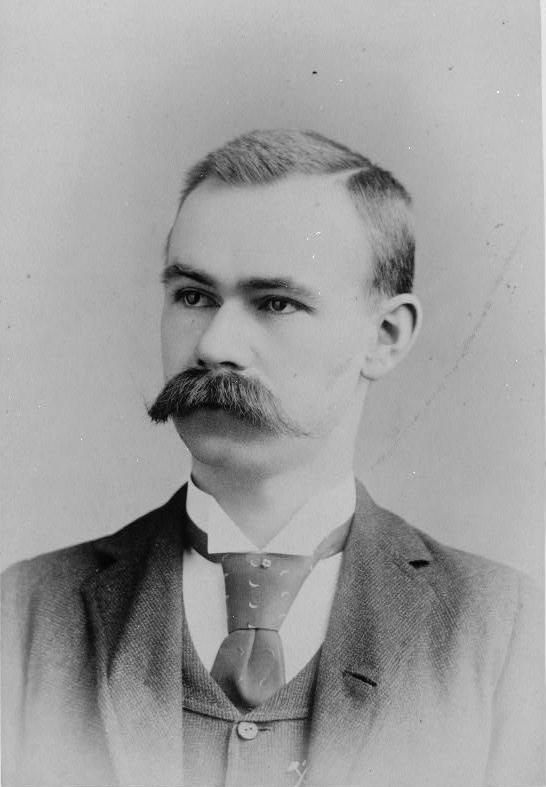

While the punch card is thought to have been invented in the 1700s by Jean-Baptiste Falcon and Basile Bouchon as a way to control textile looms in 18th century France, modern punch cards (used for data storage) were masterminded by Herman Hollerith as a way to process data for the upcoming 1890 United States Census.

In 1881, Hollerith - after spotting inefficiencies in the 1880 census - began work on a way to rapidly improve the speed of processing enormous amounts of data. Turning the 1880 census data into usable numbers took nearly eight years, and the 1890 census was estimated to take 13 years to count due to an influx of immigrants since the last census. The prospect of still tabulating one census while recording the next led the United States government to assign the Census Bureau, and Hollerith (a Census Bureau employee at the time) in particular, to find a more efficient means by which to count and record this data.

After experimenting with two similar technologies, punch cards and paper tape (similar to the punch card, but connected for easier feeding), he ultimately decided to pursue the punch card after discovering that paper tape - although easier to feed through a machine quickly - was very easy to tear, which led to inaccuracies in data recording.

Hollerith's method was a rousing success: using punch cards, the 1890 census had a full count and tabulated data after just one year. After his success with the 1890 census, Hollerith formed a company called the Tabulating Machine Company, which later became part of a four-company consolidation into one new company, known as the Computing-Tabulating-Recording Company (CTR). Later, CTR was renamed and is now known as International Business Machines Corporation, or IBM.

Punch cards saw improvements in technology up until the mid-60s, before they started to be phased out by modern computers that were getting cheaper and faster than punch card technology. While almost entirely phased out by the 70s, punch cards were still used for a variety of tasks, including as data recorders for voting machines as recently as the 2012 election.

Paper tape, on the other hand, started to show some real promise. While punch cards were still the dominant technology of the time, paper tape was used for applications in which it was better suited, and improved upon over the years until it ultimately formed the foundation for a new technology, magnetic tape.

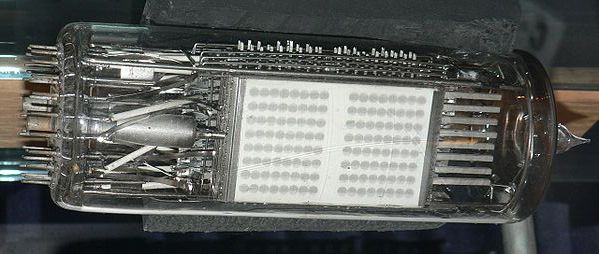

Tube Storage (1946)

When it comes to tube storage, there were only two main players: Williams-Kilburn and Selectron. Both machines were known as random access computer memory and used electrostatic cathode-ray display tubes in order to store data.

The two technologies varied slightly, but the simplest implementation used what was known as the holding beam concept. A holding beam uses three electron guns (for writing, reading and maintaining the pattern) in order to create subtle voltage variations in which to store an image (not a photo). To read the data, operators used a reading gun that scanned the storage area looking for variations in the set voltage. These changes in voltage are how the message was deciphered.

The first of these tubes was the Selectron tube, which was first developed in 1946 by Radio Corporation of America (RCA) and had an initial planned production run of 200 pieces. Problems with this first series led to a delay which saw 1948 pass by while RCA still didn't have a viable product to sell to their primary customer, John von Neumann. Von Neumann intended to use the Selectron tube for his IAS machine, which was the first fully electronic computer built at the Institute for Advanced Study, in Princeton, New Jersey. The primary appeal for von Neumann in selecting the RCA tube rather than the Williams-Kilburn model was the original Selectron's promised capacity of 4096 bits, as opposed to the Williams-Kilburn tube's 1024 bits.

Eventually, John von Neumann switched to the Williams-Kilburn model for his IAS machine after numerous production issues had caused RCA to give up on the 4096 bit concept and instead switch to the rather disappointing 256 bit version. While still used in a number of IAS-related machines, the technology was ultimately abandoned by the 50s, as magnetic-core memory became more popular, and cheaper to produce.

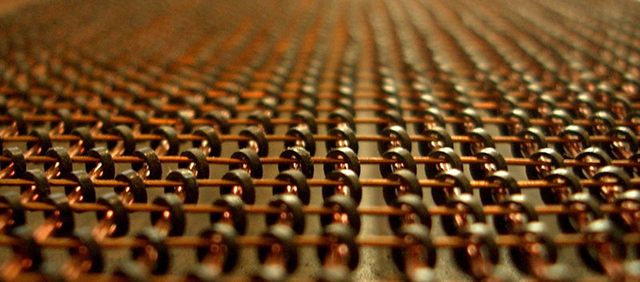

Magnetic-core Memory (1947)

Often referred to as "core" memory, magnetic-core technology became the gold standard of storage technology and had an impressive run of approximately 20 years as the dominant technology in computing during that era - most notably by IBM.

Core memory uses tiny magnetic rings (cores) threaded onto a grid of wires, with each intersection of an X and a Y wire being an independent location responsible for storing one bit. When current is sent through the wires, the core at the selected intersection is magnetized clockwise or counterclockwise in order to store a 0 or a 1. To read the data, the process works in reverse: the core is driven toward 0, and if it is unaffected, the bit is read as a 0. If the core flips to the opposite polarity, it's read as a 1.

Core was the first popular type of memory available in consumer devices that used random-access technology, which we now know as RAM. At the time, random-access memory was a real game changer as the technology allowed the user to access any memory location in the same amount of time. This technology was later advanced by the introduction of semiconductor memory, which led to the RAM chips we use in our devices today.

Magnetic-core memory was first patented in 1947 by amateur inventor Frederick Viehe. Additional patents filed by Harvard physicist An Wang (1949), RCA's Jan Rajchman (1950) and MIT's Jay Forrester (1951) for similar technology make the waters a bit cloudy when trying to determine who the actual inventor was. All of the patents were slightly different, but each was filed within just a few years of the others. In 1964, after years of legal battles, IBM paid MIT $13 million for the rights to use Forrester's 1951 patent. At the time, that was the largest patent-related settlement to date. IBM had also previously paid $500,000 for the use of Wang's patent after a series of lawsuits over the patent not being granted until five years after filing, a period that Wang argued left his intellectual property exposed to competitors.

Magnetic-core memory works by representing one bit of information on each core. Each core is magnetized either clockwise or counterclockwise, and the wires threaded through the board are arranged so that any single core can be set to a one or a zero, depending on magnetic polarity, and then stored and retrieved independently of the others. Changing the direction of the electrical current through a core's wires changes which value is written to it.
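The read and write behavior described above can be sketched as a toy model. This is only an illustration: the `CorePlane` class and its method names are hypothetical, the grid size is arbitrary, and real core memory did all of this with currents and sense wires rather than software. The one detail worth noticing is that reading a core erases it, so the value has to be written back afterward.

```python
class CorePlane:
    """Toy model of one plane of magnetic-core memory (hypothetical class)."""

    def __init__(self, rows: int, cols: int) -> None:
        # Each core holds one bit: 0 and 1 stand in for the two
        # magnetization directions (clockwise / counterclockwise).
        self.cores = [[0] * cols for _ in range(rows)]

    def write(self, x: int, y: int, bit: int) -> None:
        # Only the core where the selected X and Y wires cross is affected.
        self.cores[y][x] = bit

    def read(self, x: int, y: int) -> int:
        # Reading drives the selected core toward 0. If its polarity flips,
        # the stored bit was 1; if nothing changes, it was 0.
        flipped = self.cores[y][x] == 1
        self.cores[y][x] = 0
        bit = 1 if flipped else 0
        # The read erased the bit, so write it back.
        self.write(x, y, bit)
        return bit


plane = CorePlane(rows=4, cols=4)
plane.write(2, 1, 1)
print(plane.read(2, 1), plane.read(0, 0))
```

Because every location is reached by picking one X wire and one Y wire, any bit takes the same effort to access, which is the "random access" property the article keeps coming back to.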

While the technology mostly died in the 70s, it laid the foundation for modern computing and random-access memory solutions - specifically, internal memory solutions.

Compact Cassette (1963)

The compact cassette uses magnetic tape wrapped around two spools which are sheltered inside of a hard plastic container. As these spools spin, specialized recorders write data by manipulating the magnetic coding into triangular or circular patterns on the surface of the tape. When played through a tape player, two heads advance the tape at a standard speed (1.875-inches per second) and an electromagnet reads the variations in the tape data in order to create sound.

Much like magnetic-core memory, the compact cassette is also a magnetized storage solution. However, aside from both being magnetic, they differ in almost every other possible way. For one, the compact cassette doesn't utilize random access memory technology. Instead, compact cassettes - or just cassette tapes, as they're commonly known - utilize sequential memory. This means the information is stored in sequence, and it takes longer to access individual pieces depending on where they are located on the tape.
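To see why sequential access is slower, here is a rough sketch of how long it takes to reach a given spot on a tape. It uses the standard playback speed mentioned above and assumes the tape only ever moves at that speed; real decks fast-forward and rewind much faster, so treat the numbers as a worst case. The function name is invented for the example.

```python
TAPE_SPEED_IPS = 1.875  # inches per second, the standard playback speed


def seconds_to_reach(position_inches: float, start_inches: float = 0.0) -> float:
    """Time to move the tape from `start_inches` to `position_inches` at playback speed."""
    return abs(position_inches - start_inches) / TAPE_SPEED_IPS


# A spot 30 minutes into a side sits this many inches from the start of the tape.
position = 30 * 60 * TAPE_SPEED_IPS
print(f"{position:.0f} inches in, {seconds_to_reach(position) / 60:.0f} minutes away")
```

With random-access memory, that wait is the same tiny amount no matter where the data lives. On tape, it grows with distance.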

The compact cassette improved on another technology - the magnetic tape - which was used in the 1950s for audio and film recording (based on paper tape technology) and is still used today in some instances of music or film recording. The major improvements to the magnetic tape brought the size down significantly, making it more easily transportable, and more viable in consumer devices.

While the first compact audio cassette was introduced by Philips in 1963, it took over a decade for the format to gather any real steam. In 1979, with Sony's introduction of the Walkman, the format rose to immense popularity and stayed there for well over a decade until the CD started to come into its own in the early to mid-90s.

It's important to note that the technology behind magnetic tape, and the cassette in particular, was also responsible for another storage medium that started to gain widespread consumer acceptance around this timeframe - the VHS cassette. While magnetic tape and cassettes are now only used in specialized and very niche applications, they did pave the way for more portable, faster, and higher quality data storage mediums.

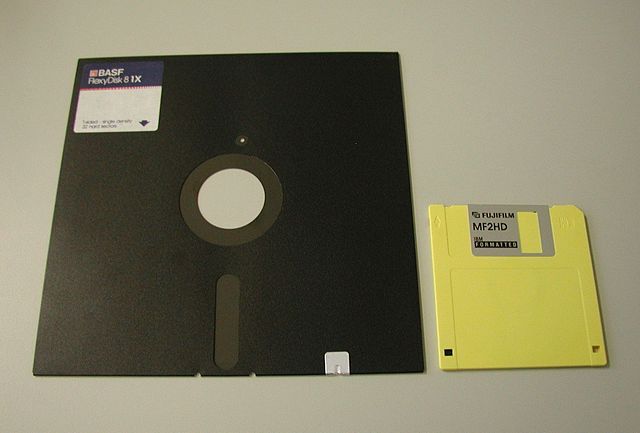

The Floppy Disk (1960s)

Much like the cassette tape, the floppy disk uses surface manipulation of the inner magnetic disc in order to record data. When placed into a disk drive, an electromagnet looks for variations on the surface of the disk in order to recover the information contained within it.

The first floppy disks were just as their name implies, floppy. The disk itself was a piece of thin and flexible plastic designed to hold a magnetic material inside. Initially, these disks were 8-inches, before the 5 1/4-inch versions were released and then both gave way to the much smaller - and not so floppy - hard plastic 3 1/2-inch diskette (also called a floppy disk).

The earliest versions of the technology started to surface in the late 1960s before becoming a computing mainstay in the early 70s. Floppy disks relied on an FDD (floppy disk drive) in order to read the data stored on the magnetic interior of the disk. For more than two decades, the floppy disk was used as the primary readable and writable storage device for personal computers.

While limitations on the technology started to become more apparent in the early 90s, disks were still widely used - in conjunction with compact disc drives - to provide an additional layer of support in instances in which backups or data storage were required. Even though CD technology was entering the market, and bridge technology such as the ZIP drive was relatively common, the technology to write to a CD was still a few years off for consumers (and quite expensive). This led to personal computers being built and shipped with floppy disk drives long after they had outlived their usefulness.

In 1998, Apple introduced the iMac, which was the first commercial success in the personal computing market that didn't include a floppy disk drive. Despite the iMac's success, the floppy disk drive didn't disappear completely from consumer-grade personal computers until 2002.

LaserDisc (1978)

Although it looks similar to a DVD or CD (albeit quite a bit larger), the LaserDisc (LD) was actually quite different. LD stored audio and video in the pits and lands on the surface of the disc through a process called pulse-width modulation. Playback was accomplished through an LD player using a helium-neon laser tube to retrieve and decode the stored information.

LaserDisc was a short-lived format that was never all that well-received by anyone but the most hardcore videophiles. However, it's an important inclusion due to the groundwork it laid for more popular optical disc formats such as CDs, DVDs, and later Blu-ray. It is important to note, however, that LaserDisc, although similar to the aforementioned technologies, wasn't digital technology. That said, it certainly offered the best analog picture and sound quality to date.

The format itself was only used for storing audio and video, although it did have practical applications that could have - if utilized - extended to computing and other data storage mediums. While VHS and Betamax video cassettes were slugging it out for market share in the 80s, LaserDisc quietly emerged in 1978 without much fanfare.

Although rather cumbersome in size, LD offered audio and video quality that was unmatched at the time. It was the first format of its kind that allowed users to pause images or use slow motion functions without noticeable losses in video quality. LaserDisc wasn't without its faults, however. One major drawback was having to flip the massive disc every 30 or 60 minutes (depending on the type of disc), at least until the even pricier players that rotated the optical pickup to the other side of the disc became popular.

If it hadn't been for the bulky and expensive players, as well as the cost of the disc itself, LD could have been a quite popular format for audio and video storage.

The format did gain slight acceptance in Japan, with approximately 10 percent of all Japanese households owning a LaserDisc player (compared to 2 percent in the US), but by the early 2000s the format was mostly dead as the smaller - and cheaper - DVD started to gain popularity.

Modern Data Storage

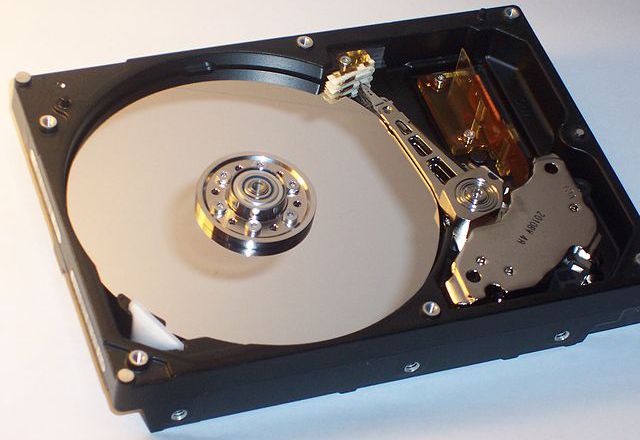

Hard Disk Drive | HDD (1980s)

The HDD records data on a thin ferromagnetic material on the surface of a spinning platter. Data is written by rapidly changing the direction of magnetization to record sequential binary bits on the surface of the platter. The data is then read from the disk by detecting these transitions in the surface magnetization in the form of 1s and 0s.

Introduced by IBM in 1956, HDDs started as devices that were about the size of a washing machine, with less storage than three 3.5-inch floppies (3.75 Megabytes of total storage vs. 4.32 Megabytes on the three floppy disks). Needless to say, it wasn't really a viable option for most practical purposes, and in the sense of modern computing we didn't begin to see the HDD in consumer-grade computers until the late 1980s. While the technology was small enough to fit in modern computers by the early 80s, the cost was still prohibitive for most consumers.

The drives themselves work by using a flat cylindrical device that looks a lot like a CD. The device - called a "platter" - holds recorded data by writing to the disk using sequential changes in the direction of magnetization in order to store data as binary bits on a thin layer of ferromagnetic material covering the outside of the platter.

These bits are read by spinning the platter and reading the transitions in the magnetization in order to form a clear picture, in binary, of what is stored on the drive. HDDs are another example of random-access memory, as they are capable of recalling data written anywhere on the strip of ferromagnetic material (on top of the platter) in about the same amount of time no matter where they are located.

Over the years, technology improved, allowing the platter to spin faster and thus read and write information more quickly. Initial consumer HDDs offered a speed of 1,200 RPM, while standard speeds on modern HDDs are typically 5,400 or 7,200 RPM. Hard disk drives can spin at up to 15,000 RPM on the most high-performance servers, although this is still rather rare.
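Spin speed matters because the drive has to wait for the right part of the platter to rotate under the head. On average that wait is half of one revolution. The sketch below works that out for the speeds mentioned above. It only covers rotational delay and ignores the time spent moving the head, and the function name is just for illustration.

```python
def avg_rotational_latency_ms(rpm: float) -> float:
    """Average wait for data to rotate under the head: half a revolution, in milliseconds."""
    seconds_per_revolution = 60.0 / rpm
    return 0.5 * seconds_per_revolution * 1000.0


# Compare the spin speeds quoted in the article.
for rpm in (1200, 5400, 7200, 15000):
    print(f"{rpm:>6} RPM -> {avg_rotational_latency_ms(rpm):.2f} ms")
```

A 7,200 RPM drive averages a little over 4 milliseconds of rotational delay, while a 15,000 RPM server drive cuts that to 2 milliseconds.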

Modern drives are moving away from platter-based technology in favor of flash memory. Flash-based drives, or SSDs (solid state drives), are faster and more reliable than a traditional HDD, and consume less power. That said, HDDs still dominate the market due to a lower price point.

Compact Disc (1979)

CDs use technology similar to LaserDisc, only in a digital format. Much like LD, information is stored within the pits and lands of a disc. Instead of analog data, this data is written in a series of 1s and 0s. To read the data within the pits and lands of the disc, a laser reads the coded information by measuring the size of the pits and the distance between them.

The term "compact disc" (or CD) was coined by Philips, which worked in conjunction with Sony to deliver, in 1979, a format that could ultimately replace the cassette tape as the next generation of audio storage and playback technology. The format became an international standard in 1987, although consumer use of the CD wasn't popular until the early 1990s. CDs quickly moved past audio-only storage and were later adapted to store data (CD-ROM), as well as video, images, or even entire computer or console games through a wide variety of disc types.

By the mid-90s the CD was the most popular data storage means in the world, and by 2000 it had surpassed the cassette tape as the most popular method of storing audio.

Also of note, this is one of the first modern technologies since the audiocassette that allowed users not only read access but the ability to write to the disc with relatively inexpensive and consumer-targeted writeable drives.

While CDs aren't widely used for data storage, games, or video due to advances in flash memory, hard drives, and better optical formats such as DVD and Blu-ray, they are still quite popular as a storage solution for music and are number two to the MP3 in terms of total usage for this purpose.

DVD and Blu-ray

DVD and Blu-ray use the same sort of technology as a CD with the notable difference being in the amount of storage a disc contains. In addition, the recovery method differs slightly as each of the two technologies uses a different laser in order to read the information contained on the disc.

DVD - or digital versatile disc - is another optical technology much like LaserDisc or CD. Although similar in appearance, CDs and DVDs vary in the amount of storage space contained on each. While the CD can hold a mere 700 MB of data, DVDs on the other hand could hold up to 4.7 GB on a standard disc, and 17.08 GB of data on a dual-layer, dual-sided disc.
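To put those capacities side by side, here is a quick sketch that expresses each disc as a number of CDs. It assumes decimal units (1 GB = 1,000 MB), and the dictionary and function names are made up for the example.

```python
# Capacities quoted above, in megabytes (assuming 1 GB = 1,000 MB).
CAPACITY_MB = {
    "CD": 700,
    "DVD (standard)": 4_700,
    "DVD (dual-layer, dual-sided)": 17_080,
}


def cds_equivalent(name: str) -> float:
    """How many 700 MB CDs it would take to match the named disc."""
    return CAPACITY_MB[name] / CAPACITY_MB["CD"]


for name in CAPACITY_MB:
    print(f"{name}: about {cds_equivalent(name):.1f} CDs")
```

By this measure a standard DVD holds a bit under seven CDs' worth of data, and the dual-layer, dual-sided version holds more than 24.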

The DVD wasn't made as a technology to replace CDs, but instead to hold larger amounts of data in addition to being a standardized format for video. CDs, on the other hand, were envisioned mainly as a data or audio storage medium. While both types of discs can handle audio, video, and other types of data, the DVD is the better choice for video: its larger storage size allows higher quality audio and video for film playback, which led to its adoption by Philips, Sony, Toshiba and Panasonic in 1995.

The DVD is still in use, but its usefulness for data storage has been stripped away due to flash storage, such as high capacity SD cards or flash drives.

Movies, on the other hand, are still made on DVD, although Blu-ray is the current standard. DVDs have a max resolution of 480i, while Blu-ray features crystal clear 1080p, which - combined with the decreasing cost of Blu-ray players - has led people to the newer format. That said, in 2014, DVD movies still outsold those on Blu-ray, so it seems DVD isn't quite dead... yet.

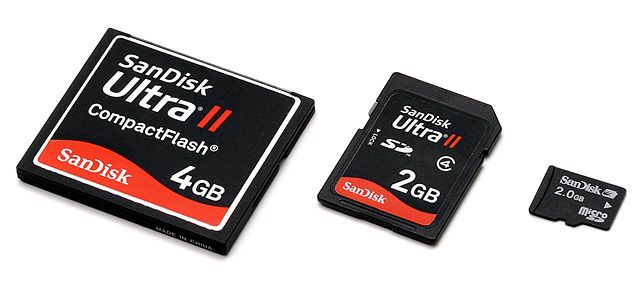

SSD and Removable Flash Storage

The SSD (solid state drive) is the heir apparent to the standard HDD due to faster read and write times, improved reliability, and better energy efficiency thanks to the absence of a platter spinning at 5400 or 7200 RPM. SSD is actually a rather old technology with roots in the RAM and magnetic-core memory discussed earlier. Originally, SSDs were RAM-based, which meant they didn't require moving parts like an HDD in order to operate. The one significant drawback to RAM-based SSDs, however, was their volatile nature, which required a constant power source in order to prevent data loss.

Current SSDs aren't reliant on RAM-based technology; instead, they use the more modern Flash storage.

Removable Flash storage devices - essentially the portable version of the SSD - are also quite popular. These devices use Flash technology in order to store data on SD cards or USB drives, which make them the smallest, fastest and most portable storage medium to date. Modern removable flash storage devices can hold up to 512 GB which means they're not only portable, they're powerhouses which are beginning to replace physical hard drives in some computers and devices.

The Move to Replace Physical Storage

As data storage technology and worldwide connectivity continue to improve, the next generation of data storage is likely going to consist of improvements to technology we already have, before we ditch physical storage altogether - for the most part. The chances of all forms of physical storage disappearing are slim to none, but the future of data storage for consumer technologies is markedly less physical.

Blu-ray - although still the best in class for movies - might just be the best example of this shift away from physical storage as the decade-old format has yet to win the war with its predecessor - the DVD. A number of factors contribute to the fact that DVDs still outsell Blu-ray worldwide and upon closer inspection these factors tell us most of what we already know about the future of data storage.

DVDs are not the biggest Blu-ray competitor. The reasons DVDs are still outselling Blu-ray obviously aren't technology-related: the cost of a Blu-ray disc or player isn't prohibitive, and there is no shortage of titles available. The real reason DVDs are still outselling Blu-ray discs is a split interest in the consumer market.

In past generations, such as DVD vs VHS, one technology just had to be better, and not too far out of line in pricing with the other. Blu-ray, on the other hand, has to compete with not only DVD, but streaming technology which is not quite a format war, but does lead to some fragmentation of the HD video market.

This alone is why DVD is still the most dominant physical video format. If you figure streaming rentals, sales and Blu-ray purchases, the next-gen technologies outsell DVDs by a wide margin. The problem, it seems, is market fragmentation as Blu-ray competes not just with DVD, but with its (possibly) next-generation competitor, streaming online video.

Streaming Media

The biggest competitor for CD, DVD and Blu-ray is streaming media. With Netflix, Hulu, Amazon Instant Video, iTunes, and dozens more, the world is full of options for music and high definition video.

With the convenience, and relative cost-effectiveness of streaming newly-released, as well as classic and hard-to-find movies, music and more, the future of data storage for entertainment is decidedly virtual.

For anyone that doubts the viability of streaming media and its ability to take down physical formats, look no further than large video chains - like Blockbuster - or even newer and more innovative technologies such as rental kiosks, or even Netflix. Netflix and their DVD via mail service offering started the wheels in motion for disruption in a video rental industry that remained relatively unchanged for decades. Now, although it's still offered in some parts of the world, Netflix is slowly backing away from their DVD mailing efforts in exchange for cheap, on-demand content that you can stream from a number of popular consumer devices.

Cloud-based Technology

While streaming media is set to disrupt physical data storage formats such as the CD, DVD and Blu-ray disc, cloud-based technology aims to provide the same sort of treatment for physical HDDs, SSDs, and removable flash media, such as SD cards and USB drives.

To put it into perspective, hard drive technology is getting cheaper and storage capacity is improving, yet laptop and desktop computers are all trending downward in the amount of storage space they come equipped with. While these are all easily upgradeable, the move toward smaller internal storage is largely due to the expanding use of cloud-based technologies in order to store data, files, photos, videos, and more.

While the chances that we'll completely do away with any sort of internal memory are rather slim - as we still need internal memory to run our operating systems - the days of limited internal memory in devices are already upon us, and we'll continue to see this effect compound as connection speeds get faster and worldwide connectivity to the web continues to grow.

The biggest concern with widespread adoption of cloud-based technology is still security. While it's not without merit, it has been proven again and again that physical storage is much more prone to data breaches and theft than encrypted information stored in the cloud. Still, we're not quite at the tipping point in the cloud versus physical storage debate; but I suspect it'll happen sooner rather than later.

Futuristic Takes on What Data Storage Could Look Like

An online backup company called Backblaze is trying to find answers to the question of how long a typical hard drive could last. After running 25,000 hard drives simultaneously for testing purposes, the current attrition rate is approximately 22 percent after just four years. Some may last decades, others will fail within the first year, but the hard truth is that modern drives aren't built to last forever - and they won't.
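To translate that four-year figure into something closer to a yearly rate, here is a back-of-the-envelope sketch. It assumes drives fail at a constant rate each year, which is a simplification made for this example and not something the Backblaze numbers above claim. The function name is hypothetical.

```python
def annualized_failure_rate(cumulative_failure: float, years: float) -> float:
    """Constant yearly failure rate that compounds to `cumulative_failure` over `years`."""
    survival = 1.0 - cumulative_failure
    return 1.0 - survival ** (1.0 / years)


drives = 25_000
failed = drives * 0.22  # drives lost after four years at 22 percent attrition
afr = annualized_failure_rate(0.22, 4)
print(f"about {failed:,.0f} failed drives, roughly {afr:.1%} per year")
```

Under that constant-rate assumption, 22 percent over four years works out to roughly 6 percent of drives failing per year, or about 5,500 of the 25,000 drives in total.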

This sort of failure rate leads to a search for more reliable storage methods, and here are two of the most exciting.

Holographic Data Storage

Current storage technologies depend on magnetic or optical means to write information, one bit at a time, on the surface of an object.

Holographic data storage wants to make the leap to recording information throughout the volume of the storage media. The technology is capable of reading and writing millions of bits in parallel, as opposed to the bit-by-bit approach, which could lead to astronomically high data capacity when compared to modern storage means.

DNA Storage

In the scientific journal Nature, an article by researchers from the European Bioinformatics Institute (EBI) detailed how 5 million bits of data were successfully stored in, then retrieved and reproduced from, DNA about the size of a speck of dust. The retrieved data consisted of a 26-second audio clip of Martin Luther King Jr.'s "I Have a Dream" speech, all of Shakespeare's 154 sonnets, a photograph of the EBI headquarters in the UK, a well-known paper on the structure of DNA by James Watson and Francis Crick and a file describing the methods used to encode and convert the data.

Theories have surrounded the use of DNA as a data storage tool for some time now, but the main issue has been the rapid breakdown of DNA in tissue when not stored in a controlled environment. This, however, may have been solved with a recent breakthrough.

Additional findings from a study detailing the long-term stability of data encoded in DNA were published in an article by researchers from ETH Zurich. Inside the study, researchers found that encapsulating the DNA in glass spheres could protect the data and allow for error-free recovery for up to 1 million years at temperatures of –18 degrees Celsius and 2000 years if stored at 10 degrees Celsius.

The technology is quite exciting, and if estimates are correct that each cubic millimeter of DNA can hold 5.5 petabits of data, then it could be a real breakthrough in terms of long-term data storage and recovery. Right now, the technology is cost prohibitive, requiring approximately $12,000 per MB to encode the data and another $220 to retrieve it.
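To see just how cost prohibitive that is, here is a quick sketch that prices out storing a CD's worth of data in DNA. It uses the figures quoted above and assumes the $220 retrieval cost is also charged per MB, which the article doesn't spell out. The names are invented for the example.

```python
ENCODE_COST_PER_MB = 12_000  # US dollars, figure quoted above
RETRIEVE_COST_PER_MB = 220   # US dollars, assumed here to be per MB as well


def dna_storage_cost(megabytes: int) -> tuple[int, int]:
    """Return (encoding cost, retrieval cost) in dollars for `megabytes` of data."""
    return megabytes * ENCODE_COST_PER_MB, megabytes * RETRIEVE_COST_PER_MB


encode, retrieve = dna_storage_cost(700)  # one CD's worth of data
print(f"encode: ${encode:,}  retrieve: ${retrieve:,}")
```

At those prices, a single 700 MB CD would cost about $8.4 million to encode and another $154,000 to read back.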

While both of these technologies open the door to what the future could hold, they're still very new and largely speculative at this point. The truth is, we aren't quite sure what the future of data storage holds, but that doesn't make it any less exciting to think about.

How many of these storage devices have you used? Which ones are you most excited about (of those listed - or others) for the future? We'd love to know what you think in the comments below.

Photo credit: IBM Copy Card by Arnold Reinhold, Paper Tape by Poil, Selectron Tube by David Monniaux, Magnetic-core Memory by Steve Jurvetson, Compact Cassette by Hans Haase, Floppy Disk 8-inch vs 3-inch by Thomas Bohl, Laserdisc / DVD Comparison by Kevin586, HDD 80GB IBM by Krzut, CDs by Silver Spoon, DVD Two Kinds, Memory Card Comparison by Evan-Amos all via Wikimedia Commons, Server Room by Torkild Retvedt via Flickr, Smart TV via Shutterstock, Herman Hollerith, head-and-shoulders portrait