Software piracy and file sharing existed well before the internet as we know it today, mainly through message boards and private FTP sites. But it was tedious to find files, and even slower to actually download them. It was more common to get your software or music fix from a friend as a physical copy (often called the "sneakernet").

P2P file sharing changed all that. Suddenly you had a direct line of access to other people's shared data. But let's back up a little: what is P2P, how does it work, and where did it start?

Before We Start

Of course, peer-to-peer file sharing technology isn't only used for piracy. But if we're honest, that's why it was created in the first place.

We'll talk mostly about the file-sharing aspect of P2P technologies, but this certainly isn't the only use case. We should also note that the term P2P covers a broad range of networks developed over the past few decades, so not everything here applies in every case. We've tried to tackle the topic as broadly as possible.

Not the Client-Server Model

First, we should explain what peer-to-peer isn't. The rest of the internet generally runs on what's called a client-server model.

A website is hosted on a powerful server somewhere in the world, which delivers a piece of information when your computer or phone requests it. This might be a font used to display the website correctly, or it could be a 2GB Linux ISO you want to download. The server sends the file to you. When the next user comes along, the process repeats.

This works well for websites, but doesn't scale well for distributing large files. It's mainly a problem of speed, bandwidth, cost, and legality.

Speed on a traditional web host is quite limited. It's fine for transmitting small amounts of text to render a website, and some web servers are optimized just to serve images. But serving larger files demands sustained throughput that a typical host can't maintain for long periods, and it ties up the server for other users. Bandwidth is also costly; just to serve the images here at MakeUseOf costs many thousands of dollars a year.

From a legal perspective, it's relatively easy to locate a single server, shut it down, then prosecute the owner. P2P was therefore born of necessity. Those who wanted to distribute copyrighted files needed a better way.

What Is Peer-to-Peer?

Peer-to-peer is an entirely different model. There is no central server; everyone who uses the network acts as both a client and a server. Instead of users simply taking files, peer-to-peer made file transfer a two-way street.

You could now give back to other users. In fact, giving back (known as "seeding" nowadays) is critical to the success of peer-to-peer networks. If everyone just downloaded without giving anything back (called "leeching"), the network would offer no benefits over a client-server model.

In the client-server model, performance degrades with more users, as the same amount of bandwidth is shared among more people. In peer-to-peer networks, more users make the network more effective. The more users that make a particular file available from their hard drives, the easier it is for new users to get that file.
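To put rough numbers on that difference, here's a back-of-the-envelope sketch. It's an idealized model, not a measurement: the function names and the bandwidth figures (a 1,000 Mbps server, 5 Mbps of upload per peer) are assumptions picked purely for illustration.

```python
def client_server_rate(server_upload_mbps: float, downloaders: int) -> float:
    """Per-user rate when a single server's upload is split evenly."""
    return server_upload_mbps / downloaders


def p2p_rate(seed_upload_mbps: float, peer_upload_mbps: float, downloaders: int) -> float:
    """Idealized per-user rate when every downloader also uploads."""
    # Assumption: every peer's upload capacity is fully used by the swarm.
    total_upload = seed_upload_mbps + peer_upload_mbps * downloaders
    return total_upload / downloaders


for n in (10, 100, 1000):
    print(n, client_server_rate(1000, n), p2p_rate(1000, 5, n))
# 10 100.0 105.0
# 100 10.0 15.0
# 1000 1.0 6.0
```

In this toy model, the client-server rate keeps shrinking toward zero as users pile in, while the peer-to-peer rate never drops below what each peer contributes.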

In modern P2P networks, it's actually faster when more users download a file. Instead of taking the whole file from one user, you're taking smaller pieces from hundreds or thousands of others. Even if they only have a little bandwidth to spare for you, the combined connections mean you get the maximum speed possible. Then you, in turn, help distribute the file to others.
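As a rough illustration of taking pieces from many users at once, the sketch below spreads piece requests across whichever peers hold each piece. The peer names and the least-loaded rule are assumptions for the example; real clients use more sophisticated piece-selection strategies.

```python
def assign_pieces(num_pieces, peers_have):
    """Map each piece index to a peer that holds it, spreading load evenly."""
    load = {peer: 0 for peer in peers_have}
    plan = {}
    for piece in range(num_pieces):
        holders = [p for p, pieces in peers_have.items() if piece in pieces]
        if not holders:
            continue  # nobody has this piece yet, so try again later
        # Pick the holder with the fewest requests so far (ties broken by name).
        chosen = min(holders, key=lambda p: (load[p], p))
        plan[piece] = chosen
        load[chosen] += 1
    return plan


# Hypothetical swarm: which piece indexes each peer currently holds.
swarm = {"alice": {0, 1, 2, 3}, "bob": {2, 3}, "carol": {0, 3}}
print(assign_pieces(4, swarm))
# {0: 'alice', 1: 'alice', 2: 'bob', 3: 'carol'}
```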

In earlier forms of P2P networks, a central server was still necessary to organize the network, acting as a database that held information on connected users and files available in the system. Though the heavy lifting of file transfers was done directly between users, the networks were still vulnerable. Knocking out that central server meant disabling communications completely.
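A minimal sketch of what such a central database might look like is shown below. The class and method names are hypothetical, not taken from any real service; the point is only that the server stores who has what, while the files themselves never pass through it.

```python
from collections import defaultdict


class CentralIndex:
    """Toy central index: maps filenames to the users sharing them."""

    def __init__(self):
        self._holders = defaultdict(set)

    def register(self, user, filenames):
        """Record that `user` is online and sharing `filenames`."""
        for name in filenames:
            self._holders[name].add(user)

    def unregister(self, user):
        """Remove a user who has gone offline."""
        for users in self._holders.values():
            users.discard(user)

    def search(self, filename):
        """Return the users to contact directly for this file."""
        return sorted(self._holders.get(filename, set()))


index = CentralIndex()
index.register("alice", ["song.mp3"])
index.register("bob", ["song.mp3", "demo.mp3"])
print(index.search("song.mp3"))  # ['alice', 'bob']
```

If this one object disappears, nobody can find anybody else, which is exactly the weakness described above.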

This is no longer the case thanks to more recent developments. Nowadays, the software can ask peers directly if they've seen a particular file. With no single point of failure left to knock out, these networks are extremely difficult to shut down.
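One simple way to ask peers directly is to pass the question along from neighbor to neighbor, giving up after a few hops. The sketch below assumes that approach (often called flooding) on a made-up five-peer network; it's an illustration of the idea rather than how any specific modern client works.

```python
from collections import deque


def flood_search(neighbors, files, start, wanted, ttl=3):
    """Return peers within `ttl` hops of `start` that hold `wanted`."""
    seen = {start}
    queue = deque([(start, 0)])
    found = []
    while queue:
        peer, hops = queue.popleft()
        if peer != start and wanted in files.get(peer, set()):
            found.append(peer)
        if hops == ttl:
            continue  # the query has travelled as far as it is allowed to
        for nxt in neighbors.get(peer, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, hops + 1))
    return found


# Hypothetical network: who is connected to whom, and who holds which files.
neighbors = {"a": ["b", "c"], "b": ["a", "d"], "c": ["a"], "d": ["b", "e"], "e": ["d"]}
files = {"c": {"x"}, "e": {"x"}}
print(flood_search(neighbors, files, "a", "x", ttl=2))  # ['c']
print(flood_search(neighbors, files, "a", "x", ttl=3))  # ['c', 'e']
```

Note that no peer in this example is special: remove any one of them and the rest can still search among themselves.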

A Brief History of Early P2P Software

Now that you have an idea of why peer-to-peer networks were such a revolution compared to the client-server model, let's take a quick look at the historical context.

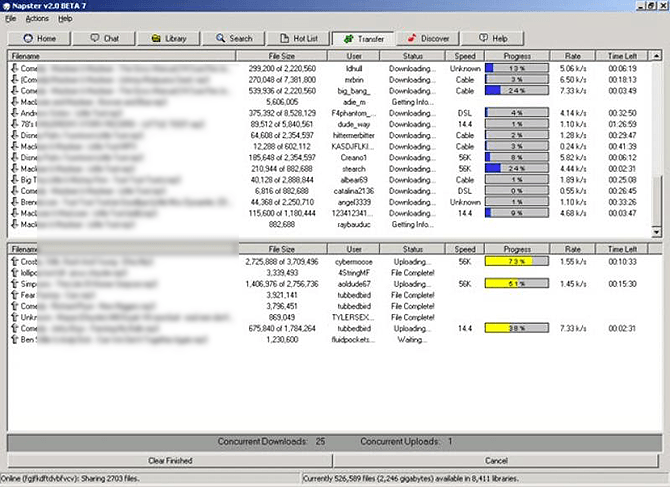

Napster, launched in 1999, was the first widely available implementation of a peer-to-peer model. A central database contained information about all the music files held by members. You would search for a song from this central server, but to download it, you would actually connect to another online user and copy from them. In turn, once you had that song in your Napster library, it became available as a source for others on the network.

You could also add your own files, which Napster would then index and add to the database, ready to propagate across the world. The implementation was limited in that you could only download from one person, however. The service had a high availability of songs, but speeds were not so great.

But with that, the concept of peer-to-peer had been unleashed on the world.

Napster was eventually shut down in 2001, but not before similar networks arose that offered more than just music. Movies, software, and images were made available on the Morpheus, Kazaa, and Gnutella networks (LimeWire was perhaps the most famous Gnutella client).

Over the years, various other protocols and peer-to-peer file sharing software came and went, but one open protocol took hold: BitTorrent.

The BitTorrent Protocol

Designed in 2001, BitTorrent is an open protocol in which users create a metadata file (called a .torrent file) containing information about the download, without actually providing the download data itself. A tracker was necessary to keep a record of who currently held that file. However, as an open protocol, anyone could write the client or tracker software.
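To make the idea of a metadata file more concrete, here's a simplified sketch. Real .torrent files use a compact encoding and more fields; the plain dictionary, the field names, and the 16 KiB piece size here are assumptions for illustration. What it does share with the real thing is the principle: the file describes the data with a SHA-1 hash per piece, so a downloader can check each piece it receives without the metadata containing the data itself.

```python
import hashlib


def make_metadata(name, data, piece_length=16384):
    """Describe `data` by name, size, and a SHA-1 hash for each piece."""
    pieces = [data[i:i + piece_length] for i in range(0, len(data), piece_length)]
    return {
        "name": name,
        "length": len(data),
        "piece_length": piece_length,
        "piece_hashes": [hashlib.sha1(p).hexdigest() for p in pieces],
    }


def verify_piece(metadata, index, piece):
    """Check a downloaded piece against the hash recorded in the metadata."""
    return hashlib.sha1(piece).hexdigest() == metadata["piece_hashes"][index]


data = b"a" * 40000  # stand-in for a real file's contents
meta = make_metadata("example.iso", data)
print(len(meta["piece_hashes"]))                 # 3
print(verify_piece(meta, 0, data[:16384]))       # True
print(verify_piece(meta, 2, b"tampered bytes"))  # False
```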

So even though it needed a central tracker to maintain the databases of those available files, multiple trackers could exist. Any single torrent descriptor file could register with multiple trackers. This made the BitTorrent network incredibly robust and almost impossible to completely destroy. Shutting down torrent sites became a game of whack-a-mole. In its lifetime, The Pirate Bay was killed and resurrected multiple times.

Since the original design, further improvements were made that enabled tracker-less downloads. DHT (distributed hash table) meant the job of indexing available files could be distributed among all users. Magnet links are another such improvement, though how they differ from torrent files is complex enough to warrant its own explanation.
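How can an index be spread among all users? One common approach, sketched below, gives every node and every file a numeric ID and makes the nodes whose IDs are "closest" to a file's ID responsible for remembering who has it. The XOR distance used here follows the Kademlia design that BitTorrent's DHT is based on, but the function names and the tiny 4-bit IDs are assumptions to keep the example readable.

```python
import hashlib


def make_id(label: str) -> int:
    """Derive a 160-bit ID from a label by hashing it with SHA-1."""
    return int.from_bytes(hashlib.sha1(label.encode()).digest(), "big")


def closest_nodes(key: int, node_ids, k=2):
    """Return the k node IDs nearest to `key` by XOR distance."""
    return sorted(node_ids, key=lambda n: n ^ key)[:k]


# Tiny 4-bit IDs so the distances are easy to check by hand.
nodes = [0b1000, 0b0010, 0b1011, 0b0111]
print(closest_nodes(0b1010, nodes))  # [11, 8]
```

Because every peer can compute the same distances, anyone looking for a file knows which nodes to ask, with no central tracker involved.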

Do You Use P2P File Sharing?

I hope this has shed some light on the meaning of peer-to-peer networking and where it began. It's fair to say P2P networks changed the internet forever. At their peak in 2006, it was estimated that P2P networks collectively accounted for over 70% of all traffic flowing across the internet.

Since then, usage has plummeted, mainly due to easily accessible video streaming services such as Netflix and YouTube. Combined with music streaming services like Spotify, there's far less reason to pirate than there once was. P2P networks filled an important gap in our history when traditional media services struggled to keep up. For everyday media consumption, they now matter far less.

Did you get a chance to use Napster back in the day? Or was your first introduction to file sharing through the humble torrent? Tell us in the comments, or if you want to learn more, check out our complete beginner's guide to torrents.

Image Credit: chromatika2/Depositphotos