OrCam announced the world's first AI-driven wearable assistive device for people with hearing impairment. The OrCam Hear just received one of CES's coveted Best of Innovation awards, acknowledging its leadership in the field. We had a chance to speak to OrCam's Leon Paull about the new device.

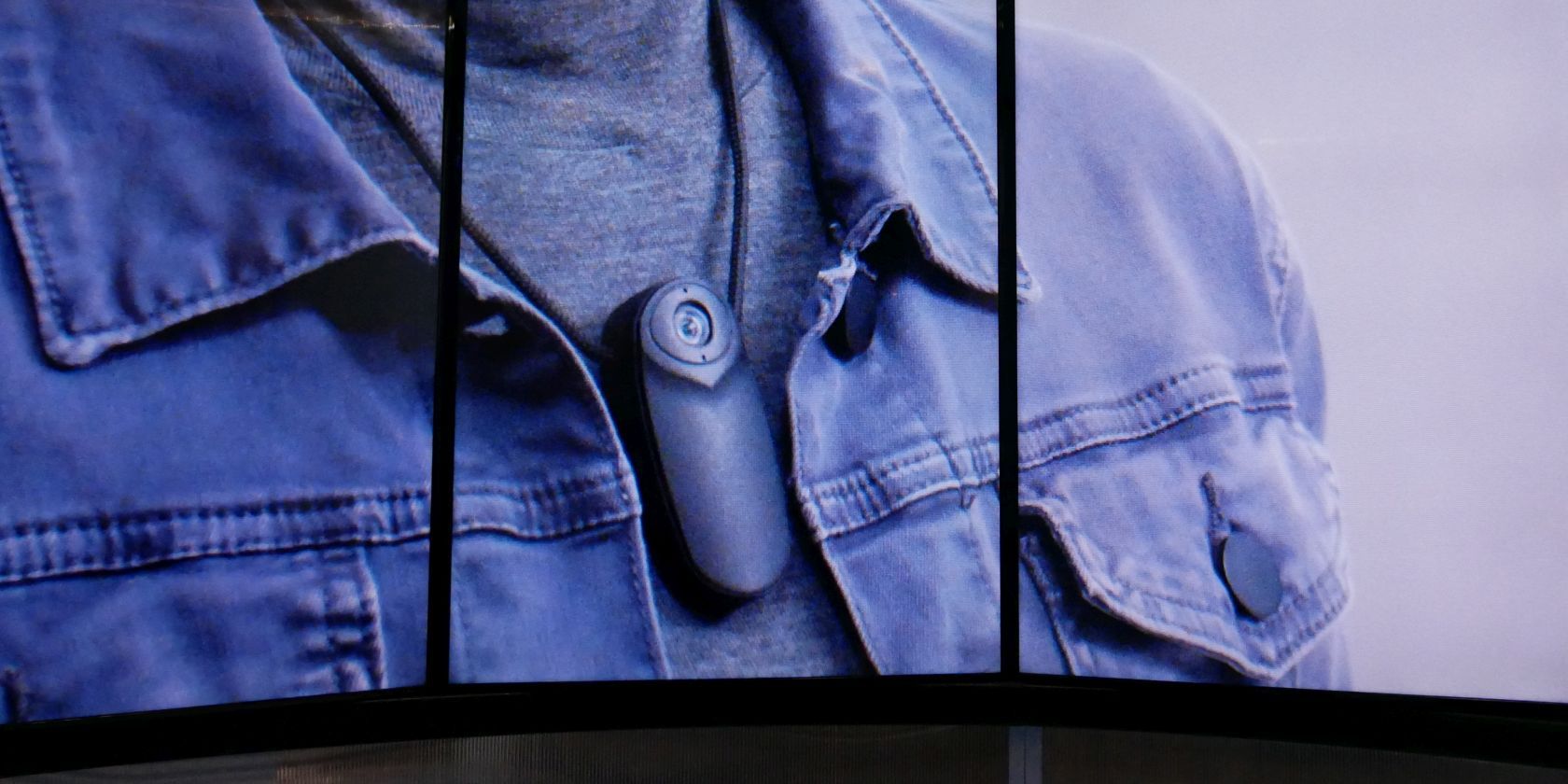

Wearable hearing aids have been around for decades, but they still struggle with background noise. To tackle the so-called "cocktail party problem", OrCam designed an AI that can identify a speaker and filter their voice out of a group of voices. The OrCam Hear is an add-on device that pairs with conventional hearing aids over Bluetooth and relays the isolated speech in real time.

How does the OrCam Hear know which voice to isolate? It identifies lip movements and body gestures. As the wearer looks at a speaker, the OrCam Hear recognizes that speaker's lip movements and, at the same time, separates their voice from the other voices. When the listener turns to hear another person, the device seamlessly switches to the new speaker in focus. The OrCam Hear requires neither a smartphone nor an internet connection; all data is processed offline and thus remains private.

Unfortunately, we were not able to try out the device ourselves. OrCam's Leon Paull told us that the company is in the final stages of development and expects to have a final product this year. Because the OrCam Hear is an add-on device, OrCam is aiming to partner with hearing aid manufacturers.