Computing history is full of Flops.

The Apple III had a nasty habit of cooking itself in its deformed shell. The Atari Jaguar, an 'innovative' games console that had some spurious claims about its performance, just couldn't grab the market. Intel's flagship Pentium chip designed for high performance accounting applications had difficulty with decimal numbers.

But the other kind of flop that prevails in the world of computing is the FLOPS measurement, long hailed as a reasonably fair comparison between different machines, architectures, and systems.

FLOPS is a measure of Floating-point Operations per Second. Put simply, it's the speedometer for a computing system. And it's been growing exponentially for decades.

So what if I told you that in a few years, you'll have a system sitting on your desk, or in your TV, or in your phone, that would wipe the floor of today's supercomputers? Incredible? I'm a madman? Have a look at history before you judge.

Supercomputer to Supermarket

A recent Intel i7 Haswell processor can perform about 177 billion FLOPS (GFLOPS), which is faster than the fastest supercomputer in the US in 1994, the Sandia National Labs XP/s140 with 3,680 computing cores working together.

A PlayStation 4 can operate at around 1.8 Trillion FLOPS thanks to its advanced Cell micro-architecture, and would have trumped the $55 million ASCI Red supercomputer that topped the worldwide supercomputer league in 1998, nearly 15 years before the PS4 was released.

IBM's Watson AI System has a (current) peak operation 80 TFLOPS, and that's nowhere near close to letting it into the Top 500 list of today's supercomputers, with the Chinese Tianhe-2 heading the Top 500 on the past 3 consecutive occasions, with a peak performance of 54,902 TFLOPS, or nearly 55 Peta-FLOPS.

The big question is, where is the next desktop-size supercomputer going to come from? And more importantly, when are we getting it?

Another Brick in the Power Wall

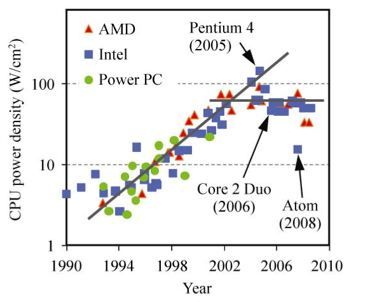

In recent history, the driving forces between these impressive gains in speed have been in material science and architecture design; smaller nanometer scale manufacturing processes mean that chips can be thinner, faster, and dump less energy out in the form of heat, which makes them cheaper to run.

Also, with the development of multi-core architectures during the late 2000's, many 'processors' are now squeezed onto a single chip. This technology, combined with the increasing maturity of distributed computation systems, where many 'computers' can operate as a single machine, means that the Top 500 has always been growing, just about keeping pace with Moore's famous Law.

However, the laws of physics are starting to get in the way of all this growth, even Intel is worried about it, and many across the world are hunting for the next thing.

…in about ten years or so, we will see the collapse of Moore's Law. In fact, already, we see a slowing down of Moore's Law. Computer power simply cannot maintain its rapid exponential rise using standard silicon technology. - Dr. Michio Kaku - 2012

The fundamental problem with current processing design is that the transistors are either on (1) or off (0). Each time a transistor gate 'flips', it has to expel a certain amount of energy into the material that the gate is made of to make that 'flip' stay. As these gates get smaller and smaller, the ratio between the energy to use the transistor and the energy to 'flip' the transistor gets bigger and bigger, creating major heating and reliability problems. Current systems are approaching - and in some cases exceeding - the raw heat density of nuclear reactors, and materials are starting to fail their designers. This is classically called the 'Power Wall' [Broken URL Removed].

Recently, some have started to think differently about how to perform useful computations. Two companies in particular have caught our attention in terms of advanced forms of quantum and optical computing. Canadian D-Wave Systems and UK based Optalysys, who both have extremely different approaches to very different problem sets.

Time to Change the Music

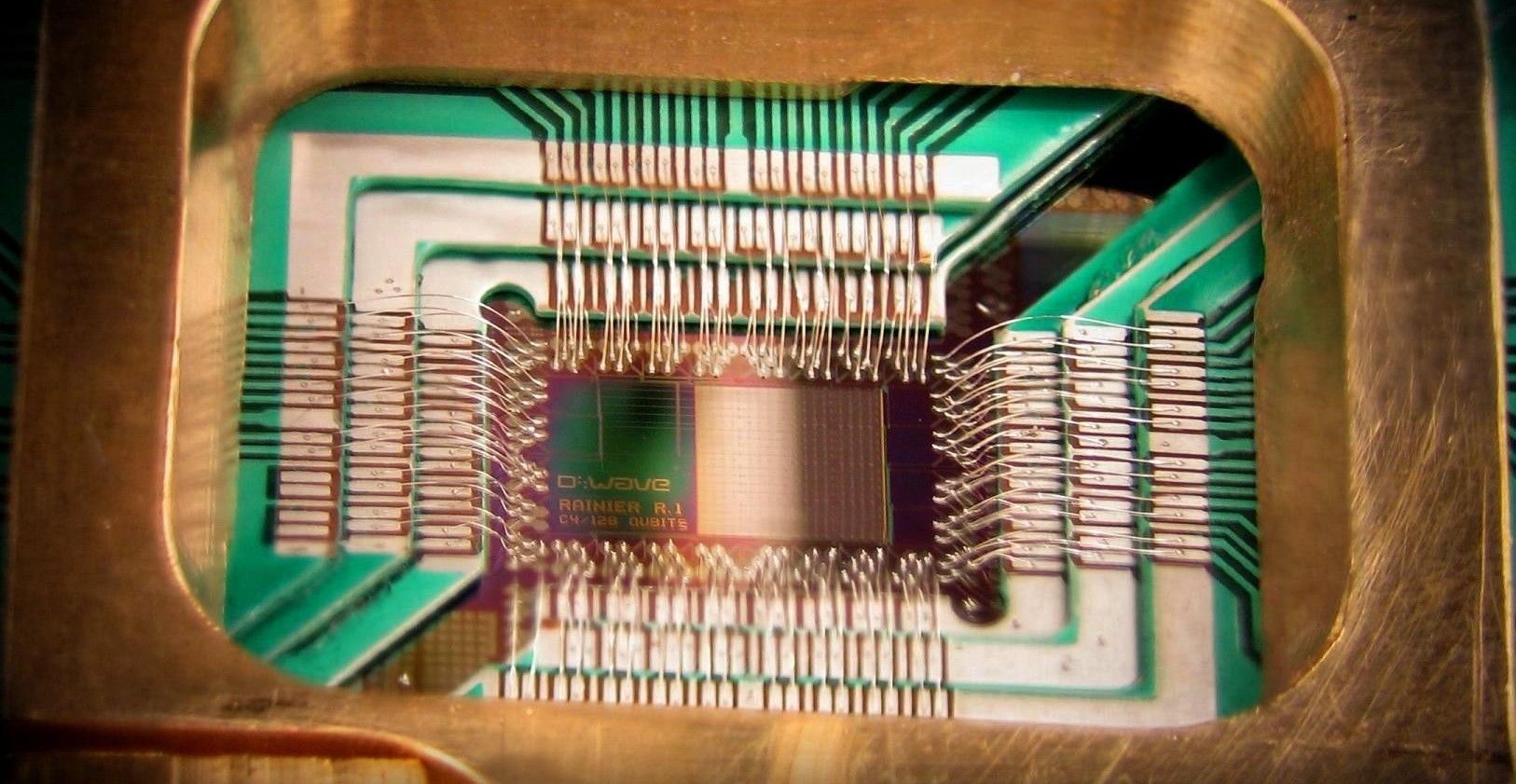

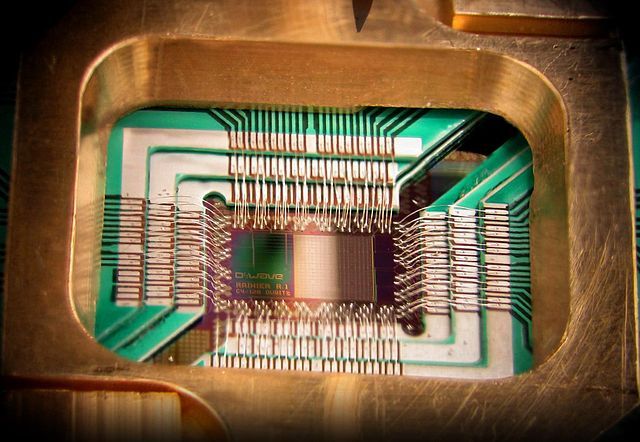

D-Wave got a lot of press lately, with their super-cooled ominous black box with an extremely cyberpunk interior-spike, containing an enigmatic naked-chip with hard-to-imagine powers.

In essence, the D2 system takes a completely different approach to problem solving by effectively throwing out the cause-and-effect rule book. So what kind of problems is this Google/NASA/Lockheed Martin supported behemoth aiming at?

The Rambling Man

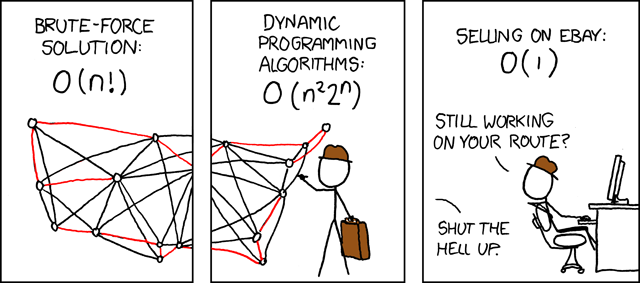

Historically, if you want to solve an NP-Hard or Intermediate problem, where there are an extremely high number of possible solutions that have a wide range of potential, using 'values' the classical approach simply doesn't work. Take for instance the Travelling Salesman problem; given N-cities, find the shortest path to visit all cities once. It's important to note that TSP is a major factor in many fields like microchip manufacture, logistics, and even DNA sequencing,

But all these problems boil down to an apparently simple process; Pick a point to start from, generate a route around N 'things', measure the distance, and if there's an existing route that's shorter than it, discard the attempted route and move on to the next until there are no more routes to check.

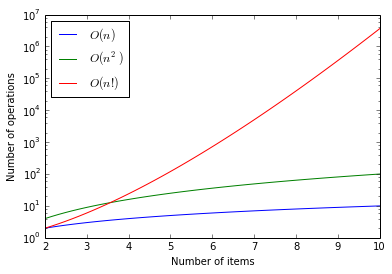

This sounds easy, and for small values, it is; for 3 cities there are 3*2*1 = 6 routes to check, for 7 cities there are 7*6*5*4*3*2*1 = 5040, which isn't too bad for a computer to handle. This is a Factorial sequence, and can be expressed as "N!", so 5040 is 7!.

However, by the time you go just a little further, to 10 cities to visit, you need to test over 3 Million routes. By the time you get to 100, the number of routes you need to check is 9 followed by 157 digits. The only way to look at these kind of functions is using a logarithmic graph, where the y-axis starts off at 1 (10^0), 10 (10^1), 100 (10^2), 1000 (10^3) and so on.

The numbers just get too big to be able to reasonably process on any machine that exists today or can exist using classical computing architectures. But what D-Wave is doing is very different.

Vesuvius Emerges

The Vesuvius chip in the D2 uses around 500 'qubits' or Quantum Bits to perform these calculations using a method called Quantum Annealing. Rather than measuring each route at a time, the Vesuvius Qubits are set into a superposition state (neither on nor off, operating together as a kind of potential field) and a series of increasingly complex algebraic descriptions of the solution (i.e. a series of Hamiltonian descriptions of the solution, not a solution itself) are applied to the superposition field.

In effect, the system is testing the suitability of every potential solution simultaneously, like a ball 'deciding' what way to go down a hill. When the superposition is relaxed into a ground state, that ground state of the qubits should describe the optimum solution.

Many have questioned how much of an advantage the D-Wave system gives over a conventional computer. In a recent test of the platform against a typical Travelling Saleman Problem, which took 30 minutes for a classical computer, took just half a second on the Vesuvius.

However, to be clear, this is never going to be a system you play Doom on. Some commentators are trying to compare this highly specialised system against a general purpose processor. You would be better off comparing an Ohio-class submarine with the F35 Lightning; any metric you select for one is so inappropriate for the other as to be useless.

The D-Wave is clocking in at several orders of magnitude faster for its specific problems compared to a standard processor, and FLOPS estimates range from a relatively impressive 420 GFLOPS to a mind-blowing 1.5 Peta-FLOPS (Putting it in the Top 10 Supercomputer list in 2013 at the time of the last public prototype). If anything, this disparity highlights the beginning of the end of FLOPS as a universal measurement when applied to specific problem areas.

This area of computing is aimed at a very specific (and very interesting) set of problems. Worryingly, one of the problems within this sphere is cryptography - specifically Public Key Cryptography.

Thankfully D-Wave's implementation appears focused on optimisation algorithms, and D-Wave made some design decisions (such as the hierarchical peering structure on the chip) that indicate that you could not use the Vesuvius to solve Shor's Algorithm, which would potentially unlock the Internet so badly it'd make Robert Redford proud.

Laser Maths

The second company on our list is Optalysys. This UK based company takes computing and turns it on its head using analogue superposition of light to perform certain classes of computation using the nature of light itself. The below video demonstrates some of the background and fundamentals of the Optalysys system, presented by Prof. Heinz Wolff.

http://www.youtube.com/watch?v=T2yQ9xFshuc

It's a bit hand-wavey, but in essence, it's a box that will hopefully one day sit on your desk and provide computation support for simulations, CAD/CAM and medical imaging (and maybe, just maybe, computer games). Like the Vesuvius, there's no way that the Optalysys solution is going to perform mainstream computing tasks, but that's not what it's designed for.

A useful way to think about this style of optical processing is to think of it like a physical Graphics Processing Unit (GPU). Modern GPU's use many many streaming processors in parallel, performing the same computation on different data coming in from different areas of memory. This architecture came as a natural result of the way the computer graphics are generated, but this massively parallel architecture has been used for everything from high frequency trading, to Artificial Neural Networks.

Optalsys takes similar principles and translates them into a physical medium; data partitioning becomes beam splitting, linear algebra becomes quantum interference, MapReduce style functions become optical filtering systems. And all of these functions operate in constant, effectively instantaneous, time.

The initial prototype device uses a 20Hz 500x500 element grid to perform Fast Fourier Transformations (basically, "what frequencies appear in this input stream?") and has delivered an underwhelming equivalent of 40 GFLOPS. Developers are targeting a 340 GFLOPS system by next year, which considering the estimated power consumption, would be an impressive score.

So Where is My Black Box?

The history of computing shows us that what is initially the reserve of research labs and government agencies quickly makes its way into consumer hardware. Unfortunately, the history of computing hasn't had to deal with the limitations of the laws of physics, yet.

Personally, I don't think D-Wave and Optalysys are going to be the exact technologies we have on our desks in 5-10 years time. Consider that the first recognizable "Smart Watch" was unveiled in 2000 and failed miserably; but the essence of the technology continues on today. Likewise, these explorations into Quantum and Optical computing accelerators will probably end up as footnotes in 'the next big thing'.

Materials science is edging closer to biological computers, using DNA-like structures to perform math. Nanotechnology and 'Programmable Matter' is approaching the point were rather than processing 'data', material itself will both contain, represent, and process information.

All in all, it's a brave new world for a computational scientist. Where do you think this is all going? Let's chat about it in the comments!

Photo credits:KL Intel Pentium A80501 by Konstantin Lanzet, Asci red - tflop4m by US Government - Sandia National Laboratories, DWave D2 by The Vancouver Sun, DWave 128chip by D-Wave Systems, Inc., Travelling Salesman Problem by Randall Munroe (XKCD)