Sometimes it's just not enough to save a website locally from your browser. Sometimes you need a little bit more power. For this, there's a neat little command-line tool known as Wget. Wget is a simple program that downloads files from the Internet. You may or may not know much about Wget already, but after reading this article you'll be prepared to use it for all sorts of tricks.

Wget is available for UNIX-like systems and the Windows command line, and it's possible to install it on Mac OS X with a bit of coaxing. So, once you know the sorts of things you can use Wget for, that knowledge is portable to whichever OS you're using, and that's handy. What's even better is that Wget can be used in batch files and cron jobs. This is where we start seeing the real power behind Wget.

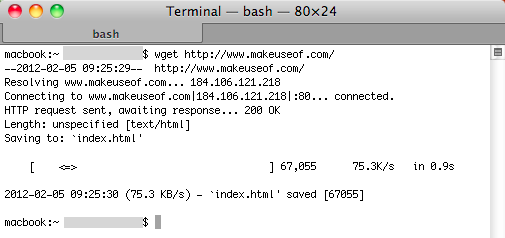

Basic Wget

The basic usage is wget URL.

wget https://www.makeuseof.com/

The simplest options most people need to know are running in the background (wget -b), continuing a partial download (wget -c), setting the number of tries (wget --tries=NUMBER) and of course help (wget -h) to remind yourself of all the options.

wget -b -c --tries=NUMBER URL
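As a rough sketch of how these options combine in practice, the snippet below wraps them in a small shell function. The function name fetch_resumable, the retry count of 5 and the example URL are placeholders chosen for illustration; nothing about Wget requires them.

```shell
# Resume a partial download in the background, giving up after 5 tries.
# fetch_resumable is a hypothetical helper name; pass the URL as the first argument.
fetch_resumable() {
  wget -b -c --tries=5 "$1"
}

# Example call (placeholder URL):
# fetch_resumable https://www.example.com/big-file.iso
```

When Wget runs in the background without an explicit log file (-o), it writes its progress to a file called wget-log in the current directory, so that is the place to look if a download seems to have stalled.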

Moderately Advanced Wget Options

Besides running in the background (wget -b), Wget can limit the speed of the download (wget --limit-rate=SPEED), refuse to ascend to the parent directory so that you only download a sub-directory (wget -np), fetch only files that are newer than your local copies (wget -N), mirror a site (wget -m), save everything into one directory without creating new ones (wget -nd), accept only certain extensions (wget --accept=LIST) and wait between requests (wget --wait=SECONDS).

wget -b --limit-rate=SPEED -np -N -m -nd --accept=LIST --wait=SECONDS URL
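To make the placeholders more concrete, here is a minimal sketch that mirrors only the PDF files below a sub-directory while going easy on the server. The function name mirror_pdfs, the 200k rate limit and the 2 second wait are assumptions picked for the example, not recommended values.

```shell
# Mirror only the PDFs below a sub-directory, politely.
# mirror_pdfs is a hypothetical name; 200k and 2 seconds are arbitrary choices.
mirror_pdfs() {
  # -m           mirror (recursive, with timestamping)
  # -np          never ascend to the parent directory
  # --limit-rate cap the download speed (k = kilobytes per second)
  # --wait       pause between requests, in seconds
  # --accept     comma-separated list of extensions to keep
  wget -m -np --limit-rate=200k --wait=2 --accept=pdf "$1"
}

# Example call (placeholder URL):
# mirror_pdfs https://www.example.com/docs/
```

Because -m already turns on timestamping, -N does not need to be repeated here. To keep several file types, list them with commas, for example --accept=pdf,epub.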

Download With Wget Recursively

You can download recursively (wget -r), follow links onto other hosts (wget -H), convert links to point at your local copies (wget --convert-links) and set the recursion depth (wget --level=NUMBER, using inf or 0 for infinite).

But some sites don't want to let you download recursively and will check the user agent your client reports in an attempt to block bots. To get around this, declare a browser-like user agent such as Mozilla (wget --user-agent=AGENT).

wget -r -H --convert-links --level=NUMBER --user-agent=AGENT URL
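As an illustration, the sketch below fetches a site two levels deep and rewrites the links for offline browsing. The function name crawl_offline, the depth of 2 and the short "Mozilla/5.0" agent string are assumptions made for the example; a real browser sends a much longer string, and -H is left out on purpose so the crawl stays on one host.

```shell
# Grab a site two levels deep with links rewritten for offline browsing.
# crawl_offline is a hypothetical name; the agent string is a shortened placeholder.
crawl_offline() {
  wget -r --level=2 --convert-links --user-agent="Mozilla/5.0" "$1"
}

# Example call (placeholder URL):
# crawl_offline https://www.example.com/
```

Adding -H to a recursive download can pull in far more than expected, so it is usually worth keeping --level small when you do span hosts.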

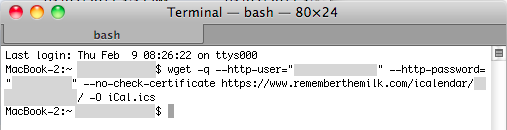

Password Protected Wget

It's possible to declare the username and password for a particular URL while using wget (wget --http-user=USER --http-password=PASS). This isn't recommended on shared machines as anyone viewing the processes will be able to see the password in plain text.

wget --http-user=USER --http-password=PASS URL

An example of this in action is using wget to back up your tasks from Remember The Milk.
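If the plain-text password is a concern, one alternative is to let Wget prompt for it. The sketch below assumes a Wget version that supports --ask-password and uses --user, the general counterpart of --http-user; the function name fetch_protected and the example values are hypothetical.

```shell
# Prompt for the password instead of putting it on the command line.
# Assumes a Wget version that supports --ask-password.
fetch_protected() {
  # $1: username, $2: URL
  wget --user="$1" --ask-password "$2"
}

# Example call (placeholder values):
# fetch_protected alice https://www.example.com/private/report.pdf
```

Because the prompt is interactive, this approach suits a terminal session rather than a cron job.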

Wget Bulk Download

First, create a text file listing all the URLs you want to download, one per line, and call it wget_downloads.txt. Then, to download the URLs in bulk, type in this command:

wget -i wget_downloads.txt
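The list file itself can be created from the command line. This is a small sketch with two placeholder URLs; swap in your own before running wget -i on the result.

```shell
# Build the list file, one URL per line (placeholder URLs).
cat > wget_downloads.txt <<'EOF'
https://www.example.com/files/report.pdf
https://www.example.com/files/photos.zip
EOF

# Sanity check: count the non-empty lines Wget will be asked to fetch.
grep -c . wget_downloads.txt
```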

Cool Uses For Wget

This will crawl a website and write a log file, wget.log, that records any broken links:

wget --spider -o wget.log -e robots=off --wait 1 -r -p http://www.mysite.com/
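The log can get long, so it may help to pull out just the failing URLs afterwards. The sketch below rests on an assumption about the log layout: that each request starts with a line beginning with "--" and ending in the URL, and that failures are flagged with the words "broken link". Check your own wget.log before relying on it, as the format can differ between versions. The function name broken_links is hypothetical.

```shell
# Print the URLs that a spider run flagged as broken.
# Assumes request lines start with "--" and end with the URL, and that
# failures are marked with the text "broken link" (verify against your log).
broken_links() {
  awk '/^--/ { url = $NF } /broken link/ { print url }' "$1" | sort -u
}

# Example call:
# broken_links wget.log
```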

This will take a text file of your favourite music blogs and download any new MP3 files:

wget -r --level=1 -H --timeout=1 -nd -N -np --accept=mp3 -e robots=off -i musicblogs.txt

What else do you use wget for?

Image Credit: Social Media Connection via Shutterstock, Young Man Watching TV via Shutterstock, Globe via Shutterstock