IBM has built something remarkable. Announced last week via an article in Science, "TrueNorth" is what's known as a 'neuromorphic chip' -- a computer chip designed to imitate biological neurons, for use in intelligent computer systems like Watson.

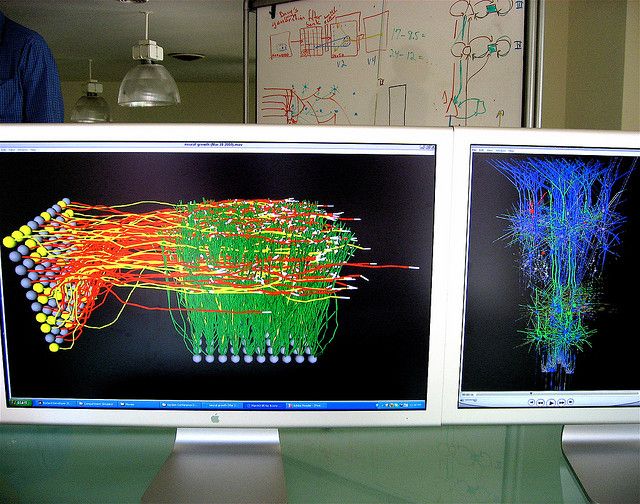

The chip, manufactured by Samsung using a 28 nm process, has 4096 specialized cores and can simulate one million human-like neurons with 256 synapses each. The neurons simulated by the chip are 'spiking neurons' -- a more detailed, biologically inspired model than the neurons usually used in machine learning. Spiking neurons encode information in the timing and frequency of pulses moving from neuron to neuron.
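To give a rough feel for how a spiking neuron carries information in pulse timing and frequency, here is a minimal sketch of a leaky integrate-and-fire neuron, a common textbook model. It is not IBM's neuron model; the function name `simulate_lif` and every parameter value are assumptions chosen purely for illustration.

```python
def simulate_lif(input_current, steps=100, dt=1.0, tau=20.0,
                 threshold=1.0, v_reset=0.0):
    """Return the time steps at which a leaky integrate-and-fire neuron spikes.

    All parameters are illustrative assumptions, not TrueNorth values.
    """
    v = 0.0          # membrane potential
    spikes = []
    for t in range(steps):
        # The potential leaks back toward zero while integrating the input.
        v += dt * (-v / tau + input_current)
        if v >= threshold:
            spikes.append(t)   # emit a pulse...
            v = v_reset        # ...and reset the potential
    return spikes


weak = simulate_lif(0.06)
strong = simulate_lif(0.12)
silent = simulate_lif(0.04)

print("weak input spikes at:", weak)
print("strong input spikes at:", strong)
print("sub-threshold input spikes at:", silent)
```

In this toy model a stronger input makes the neuron fire sooner and more often, while an input too weak to push the potential over the threshold produces no pulses at all. That is the sense in which the signal lives in the timing and rate of spikes rather than in a single numeric output.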

The chip can only simulate about a quarter as many neurons as are found in the cerebral cortex of a typical mouse, but is already capable of impressive feats of pattern recognition when combined with a traditional computer system.

What is TrueNorth?

TrueNorth is the product of an ambitious project brewing at IBM since 2011, with the long-term goal of constructing a neuromorphic device capable of human-like intellect that can fit in the space of the human brain and consume a similar amount of power (about twenty watts, or 1/7th of the power drawn by a Pentium 4). The research was partially funded by DARPA, the DoD's research arm, as part of their AI initiative, 'SyNAPSE'. If you've seen Terminator 2, it wouldn't be unfair to draw a comparison.

TrueNorth is a bold first step towards that vision, although there's a long way to go -- the human cerebral cortex contains 20 billion neurons, 20,000 times more than IBM's chip. Many features of the architecture of certain regions of the brain are still not well understood. Complicating matters, the chip itself only executes the behavior of the network: training the network (figuring out the synaptic connections that make the network do what you want) is currently handled using traditional computer processors, although IBM would like to move that function onto the chip someday.

IBM has tested the chip on a number of standard machine intelligence benchmarks, including an image recognition task designed by DARPA, on which the chip scores about 80% accuracy. That's a very good score -- although what's more impressive is that the chip performed this feat in real time, processing thirty images a second, and drew only 63 milliwatts to do it -- about a seventh of the power drawn by a typical nightlight.

If you simply stacked 20,000 of these chips to equal the human brain's signal-crunching power by brute force, the result would be a server that drew about as much power as a typical electric kettle -- roughly sixty times more than the brain, but not a prohibitive amount by any means. This stands in contrast to the supercomputer you'd normally need to achieve the same task. Dharmendra Modha, an IBM Fellow, put it this way:

"To underscore this divergence between the brain and today’s computers, note that a 'human-scale' simulation with 100 trillion synapses required 96 Blue Gene/Q racks of the Lawrence Livermore National Lab Sequoia supercomputer."
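The brute-force scaling estimate can be checked with some back-of-envelope arithmetic. The sketch below uses only figures quoted in this article (one million neurons and 63 milliwatts per chip, 20 billion cortical neurons, twenty watts for the brain, thirty images a second). It assumes power grows linearly with the number of chips and ignores any overhead for wiring them together, which is an optimistic simplification. On these figures the stack draws about 1.26 kilowatts, around sixty times the brain's budget.

```python
# Figures quoted in the article; the linear-scaling assumption is ours.
NEURONS_PER_CHIP = 1_000_000
CORTEX_NEURONS = 20_000_000_000
CHIP_POWER_W = 0.063          # 63 milliwatts
BRAIN_POWER_W = 20.0
IMAGES_PER_SECOND = 30

chips_needed = CORTEX_NEURONS // NEURONS_PER_CHIP
total_power_w = chips_needed * CHIP_POWER_W        # assumes no interconnect overhead
ratio_to_brain = total_power_w / BRAIN_POWER_W
energy_per_image_mj = CHIP_POWER_W / IMAGES_PER_SECOND * 1000

print(f"chips needed: {chips_needed}")                     # chips needed: 20000
print(f"total power: {total_power_w:.0f} W")               # total power: 1260 W
print(f"ratio to brain: {ratio_to_brain:.0f}x")            # ratio to brain: 63x
print(f"energy per image: {energy_per_image_mj:.1f} mJ")   # energy per image: 2.1 mJ
```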

So what does this chip actually mean for the future of AI applications?

Well, this chip is just the start: there's significant progress to be made in increasing the density and connectedness of neurons, bringing power consumption even lower, and building larger and larger populations of interconnected neurons. There's also the possibility of using dedicated hardware to train the network, which could open up massive improvements in the accuracy of the network's behavior.

However, this sort of technology will begin finding its way into applications in the relatively near future: the chip is small enough and consumes little enough power that multi-million neuron networks can be embedded into devices like autonomous robots and smartphones, accelerating the burgeoning ambient intelligence revolution.

IBM also hopes to install chips containing perhaps billions of neurons into its Watson computer systems, allowing Watson's software to hand off difficult problems to hardware neural networks and get back solutions. That would make Watson units cheaper, smaller, and smarter. Ideally, you could eventually run a system like Watson on every smartphone.

Intelligence is rapidly becoming one of the most valuable commodities in computing, and making it cheaper to operate intelligent systems is going to have a huge impact on where those systems can be deployed and what they can be used for. There are risks to this sort of progress, of course, but the value is also potentially enormous. Computer intelligence is likely to change every aspect of our lives in the coming years, and this sort of research will make it cheaper and more powerful.

Image credits: "IBM Think Card", by Stephen Coles, "IBM Blue Gene/P", by Argonne National Labs, "Brainstorm" by Steve Jurvetson, agsandrew/Shutterstock