Google recently released a concept video for Project Glass, and the media world has jumped on it as the next revolution in computing, though some doubt its technical feasibility. Today I'd like to take a closer look at how feasible these real-life Google Goggles are, what we know so far, and why this concept video should perhaps be taken with a grain of salt. I won't be getting into the ethics, or why these might be the best thing since sliced bread.

Bear in mind I'm certainly no expert on the topic, but I've done a good bit of research and would like to present two possibilities.

First, here's the concept video in full, for those of you who haven't seen it.

http://www.youtube.com/watch?v=9c6W4CCU9M4

And while we're on it, here's Tom Scott's hilarious take on the matter:

http://www.youtube.com/watch?v=t3TAOYXT840

Full Field of Vision Display

The main issue with the concept video is the way it implies a display could be overlaid on the user's entire field of vision. This is quite unlikely with current LCD technologies. Wired asked some industry experts, and they all say the same thing - the product images we've seen and the capabilities of current technology suggest that what is portrayed in the video is unlikely. Or is it?

LCD Displays and Transparent AMOLEDs

You should know first that simply making a pair of glasses with transparent LCD screens for eyepieces wouldn't work, because our eyes are biologically incapable of focusing on anything that close. If the screens were about 10cm away (try it with your phone), you could theoretically shift focus between the screens and distant real-world objects, but it would be painful and impractical. A solution is needed that allows the display to be both close to your eye (as in, in a pair of glasses) and focused at infinity, so that the image is clear no matter where you look.
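To put rough numbers on that: focusing effort is usually expressed in diopters, which is simply one divided by the viewing distance in meters. Here's a minimal sketch of the arithmetic. The 2cm figure for a screen sitting in a glasses frame is my own ballpark assumption, not a measurement of any product, and the function name is made up for illustration.

```python
import math


def accommodation_demand(distance_m: float) -> float:
    """Focusing demand in diopters for an object at distance_m meters."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    if math.isinf(distance_m):
        # An image focused at infinity needs no focusing effort at all.
        return 0.0
    return 1.0 / distance_m


# Illustrative distances only (assumptions, not product specs).
DISTANCES_M = {
    "phone held at 10 cm": 0.10,
    "screen in a glasses frame (~2 cm, assumed)": 0.02,
    "image focused at infinity": math.inf,
}

for label, distance in DISTANCES_M.items():
    print(f"{label}: {accommodation_demand(distance):.1f} D")
```

A young adult eye can typically manage somewhere around 10 diopters of focusing range, and less with age, so 10cm is already about the limit and a screen a couple of centimeters away is far beyond it. That's why the optics have to do the work instead.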

What most industry experts are assuming, therefore, is that the glasses use some form of miniature AMOLED or LCD display, combined with optics that let the output be displayed at infinite focus. This is the method employed by existing commercial products, such as these ski goggles:

However, this method only allows a display to occupy a small part of your field of vision, certainly not all of it.

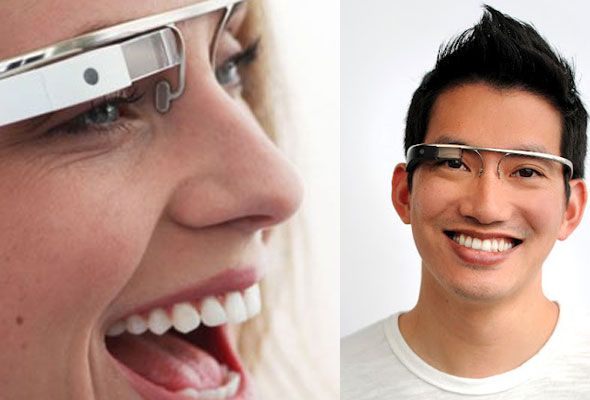

Looking at both the recent product images:

And the photograph from Robert Scoble:

We can say that this method is certainly a possibility - a small screen, off to the corner of your vision. If this is the method Google has chosen, then we can safely say that what is portrayed in the concept video is impossible - the glasses simply wouldn't be capable of placing something in the center of your field of vision.

Virtual Retinal Displays

However, this isn't the only way it could be achieved. To overcome the problem of having to focus on an image at all, it is possible instead to beam the image directly onto the retina - a so-called Virtual Retinal Display - bypassing the need for a screen altogether. In this scenario a small laser projector would be needed, which is again consistent with what we've seen so far. Though such devices are rare, Japanese manufacturer Brother (drawing on their knowledge of laser and inkjet printers!) did in fact demonstrate a working prototype called AirScouter back in late 2010, in which the wearer perceived a 16-inch screen projected directly onto the retina whilst still being able to see the background. This video explains:

http://www.youtube.com/watch?v=9I0hF0cbw8E

Notice that toward the end of the video, they even propose pairing it with a cellphone to show Augmented Reality directions, remarkably similar to the Project Glass concept.
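For a sense of scale, how much of your vision a "16-inch screen" fills depends entirely on how far away it appears to float. Here's a quick sketch of that geometry. The 1 meter apparent distance is purely my assumption for illustration, and the function name is hypothetical.

```python
import math


def angular_size_deg(size_m: float, distance_m: float) -> float:
    """Angle in degrees subtended by a flat object of extent size_m,
    viewed head-on and centered, from distance_m meters away."""
    return math.degrees(2 * math.atan(size_m / (2 * distance_m)))


INCH_M = 0.0254
diagonal_m = 16 * INCH_M      # the 16-inch perceived screen mentioned above
assumed_distance_m = 1.0      # ASSUMPTION: apparent distance of the virtual screen

angle = angular_size_deg(diagonal_m, assumed_distance_m)
print(f"Diagonal spans about {angle:.0f} degrees at {assumed_distance_m} m")
```

On those assumptions the diagonal spans about 23 degrees; halve the apparent distance and it grows to roughly 44 degrees. The numbers shift a lot with the assumed distance, so treat this as a way to compare scenarios rather than a spec.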

The Rest

Once the display is sorted, the rest is trivial.

Mobile data connections are fast enough nowadays to provide fairly instant and data-rich feedback on locations and objects.

Cameras are tiny, and one could easily be embedded into the frame of the 'glasses', transmitting images for recognition purposes as the Google Goggles application currently does on Android phones.

A CPU and battery - I suspect these would be too bulky to fit on the frame with current technology, but it wouldn't be unreasonable to expect users to carry the bulk of the device somewhere else on their person. I'm not suggesting the glasses link together with a separate Android phone, but phone-like functionality was in the concept and this would be one way to solve the bulk issue. Either way, there's no technological barrier.

Verdict?

If I'm completely honest, I began researching this article as a total skeptic - sure that the full field of vision display portrayed in the concept video was nonsense, and aiming to debunk it. However, I'm now convinced that, with a retinal projection system, this could actually become a reality. The technology is there - it was there a year ago - and Google has enough research cash to turn it into a viable commercial product, backed by their immense database of information for Augmented Reality applications.

What is it that makes me so convinced it isn't just a transparent mini-screen? This photograph from Thomas Hawk, taken at a recent Dining in the Dark charity event, clearly shows that it's more like a block of glass - a lens - which would be used to refract a projected image.

You know the excitement an Apple fanboy gets when it's time for another keynote? Right now, I'm feeling that for you, Google - please don't let me down.

Comments are welcome, but there are more articles to come on the topic so let's keep the conversation purely on the side of technical feasibility if possible.