We can talk to almost all of our gadgets now, but exactly how does it work? When you ask "What song is this?" or say "Call Mom", a miracle of modern tech is happening. And while it feels like it's on the cutting edge, this idea of talking to devices goes back decades -- almost as far as jetpacks in science fiction!

Today, the bulk of the attention given to voice-driven computing is on smartphones. Apple, Amazon, Microsoft, and Google are at the top of the chain, each one offering its own way to talk to electronics. You know who they are: Siri, Alexa, Cortana, and the nameless "Ok, Google" being. Which raises a big question...

How does a device take spoken words and turn them into commands it can understand? In essence, it comes down to pattern matching and making predictions based on those patterns. More specifically, voice recognition is a complex task built on two techniques: Acoustic Modeling and Language Modeling.

Acoustic Modeling: Waveforms & Phones

Acoustic Modeling is the process of taking a waveform of speech and analyzing it using statistical models. The most common method for this is Hidden Markov Modeling, which is used in what's called pronunciation modeling to break speech down into component parts called phones (not to be confused with actual phone devices). Microsoft has been a leading researcher in this field for many years.

Hidden Markov Modeling: Probability States

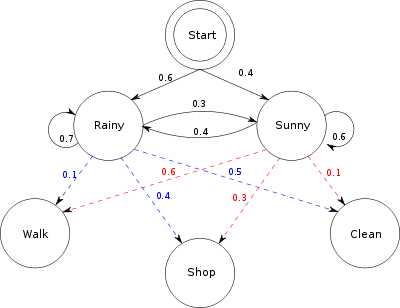

Hidden Markov Modeling is a predictive mathematical model in which the current state can't be observed directly, so it is inferred by analyzing the output that state produces. Wikipedia has a great example using two friends.

Imagine two friends -- Local Friend and Remote Friend -- who live in different cities. Local Friend wants to figure out what the weather is like where Remote Friend lives, but Remote Friend only wants to talk about what he did that day: walk, shop, or clean. The likelihood of each activity depends on the day's weather.

Pretend that this is the only information available. With it, Local Friend can find trends in how the weather changed from day to day, and using these trends, she can start making educated guesses about what today's weather will be based on her friend's activity yesterday. (You can see a diagram of the system above.)
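To make the two-friends example concrete, here is a minimal Python sketch of how Local Friend could work out the most likely run of weather from a list of activities. It uses the Viterbi algorithm, a standard way of decoding a hidden Markov model. The article gives no actual probabilities, so every number below is an assumed, illustrative value, and the names (`START`, `TRANSITION`, `EMISSION`, `viterbi`) are made up for this sketch.

```python
# Illustrative numbers only: these probabilities are assumptions for the
# two-friends example, not measured values.
STATES = ("Rainy", "Sunny")
START = {"Rainy": 0.6, "Sunny": 0.4}
TRANSITION = {
    "Rainy": {"Rainy": 0.7, "Sunny": 0.3},
    "Sunny": {"Rainy": 0.4, "Sunny": 0.6},
}
EMISSION = {
    "Rainy": {"walk": 0.1, "shop": 0.4, "clean": 0.5},
    "Sunny": {"walk": 0.6, "shop": 0.3, "clean": 0.1},
}


def viterbi(observations):
    """Return (most likely weather sequence, probability of that sequence)."""
    # best[state] = (probability of the best path ending in state, that path)
    best = {s: (START[s] * EMISSION[s][observations[0]], [s]) for s in STATES}
    for obs in observations[1:]:
        step = {}
        for s in STATES:
            # Pick the previous state that makes arriving in s most likely.
            prob, path = max(
                ((best[prev][0] * TRANSITION[prev][s], best[prev][1]) for prev in STATES),
                key=lambda item: item[0],
            )
            step[s] = (prob * EMISSION[s][obs], path + [s])
        best = step
    prob, path = max(best.values(), key=lambda item: item[0])
    return path, prob


if __name__ == "__main__":
    path, prob = viterbi(["walk", "shop", "clean"])
    print(path, prob)
```

With these assumed numbers, three days of "walk, shop, clean" decode to Sunny, Rainy, Rainy. A speech recognizer plays the same game, with sounds standing in for the weather and slices of the waveform standing in for the activities.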

If you want a more complex example, check out this one in MATLAB. In voice recognition, this model essentially compares each part of the waveform against what comes before and what comes after, and against a dictionary of waveforms, to figure out what's being said.

Essentially, if you make a "th" sound, it's going to check that sound against the most probable sounds that usually come before and after it. Maybe that means checking against the "e" sound, the "at" sound, and so on. When the pattern matches up correctly, it then has your whole word. This is an oversimplification, but you can see Microsoft's whole explanation here.
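The last step, going from a run of phones to whole words, can be sketched as a dictionary lookup. The toy pronunciation dictionary below is hypothetical (the phone symbols are written ARPAbet-style), and `phones_to_words` is an invented helper. Real recognizers score many candidate words probabilistically instead of demanding an exact match, so treat this as a simplification of the idea, not how any shipping product does it.

```python
from functools import lru_cache

# Hypothetical toy pronunciation dictionary (ARPAbet-style phone symbols).
PRONUNCIATIONS = {
    ("DH", "AH"): "the",
    ("DH", "AE", "T"): "that",
    ("K", "AE", "T"): "cat",
    ("S", "AE", "T"): "sat",
}
MAX_PHONES = max(len(p) for p in PRONUNCIATIONS)


def phones_to_words(phones):
    """Split a phone sequence into dictionary words, or return None if impossible."""
    phones = tuple(phones)

    @lru_cache(maxsize=None)
    def decode(start):
        if start == len(phones):
            return ()  # consumed every phone: success
        # Try every dictionary-sized chunk starting at this position.
        for end in range(start + 1, min(start + MAX_PHONES, len(phones)) + 1):
            word = PRONUNCIATIONS.get(phones[start:end])
            if word is not None:
                rest = decode(end)
                if rest is not None:
                    return (word,) + rest
        return None  # no way to split the remaining phones into words

    result = decode(0)
    return list(result) if result is not None else None


if __name__ == "__main__":
    print(phones_to_words(["DH", "AH", "K", "AE", "T", "S", "AE", "T"]))
```

Notice that "DH" on its own matches nothing. Only once the following sounds arrive does the pattern resolve into "the" or "that", which is the "check it against what comes before and after" idea in miniature.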

Language Modeling: More Than Sound

Acoustic Modeling goes a long way toward helping your computer understand you, but what about homonyms and regional variations in pronunciation? That is where Language Modeling comes into play. Google has driven a lot of research in this area, mainly through the use of N-gram Modeling.

When Google is trying to understand your speech, it does so based on models derived from its massive bank of Voice Search and YouTube transcriptions. All of those hilariously wrong video captions have actually helped Google to evolve their dictionaries. Also, they used the departed GOOG-411 to collect information on how people speak.

All of this language collection created a vast array of pronunciations and dialects, which made for a robust dictionary of words and how they sound. This allows for matches with a much lower error rate than brute force matching based on raw probabilities. You can read a brief paper describing their methods here.

While Google is a leader in this field, there are other mathematical models being developed, including continuous space models and positional language models, which are more advanced techniques born from research in artificial intelligence. These methods are based on replicating the sort of reasoning humans do when listening to each other. They are much more advanced, both in terms of the tech behind them and the math and programming needed to map out these models.

N-Gram Modeling: Probability Meets Memory

N-gram Modeling works based on probabilities, but it uses an existing dictionary of words to create a branching tree of possibilities, which is then smoothed out for the sake of efficiency. In a way, this means that N-gram Modeling does away with a lot of the uncertainty in the aforementioned Hidden Markov Modeling.

As noted above, this method's strength comes from having a large dictionary of words and usage, not just primitive sounds. This gives the program the ability to tell the difference between homophones, like "beat" and "beet". It's contextual, which means that when you're talking about last night's scores, the program isn't pulling up words about borscht.
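Here is a minimal sketch of how context settles the "beat" versus "beet" question. It builds a bigram model (an N-gram with N = 2) from a tiny made-up corpus and uses add-one smoothing so unseen word pairs still get a small, non-zero probability. The corpus and the function names are assumptions for illustration; a production system would train on vastly more text and use more refined smoothing.

```python
from collections import Counter

# Tiny made-up corpus; a real system would train on vastly more text.
CORPUS = [
    "our team beat the rivals last night",
    "the home team beat them again",
    "she added a beet to the borscht",
    "roast the beet before making borscht",
]

unigrams = Counter()
bigrams = Counter()
for sentence in CORPUS:
    words = sentence.split()
    unigrams.update(words)
    bigrams.update(zip(words, words[1:]))  # count adjacent word pairs
VOCAB_SIZE = len(unigrams)


def bigram_prob(prev, word):
    """Estimate P(word | prev) with add-one (Laplace) smoothing."""
    return (bigrams[(prev, word)] + 1) / (unigrams[prev] + VOCAB_SIZE)


def pick_homophone(prev, candidates):
    """Choose the candidate most likely to follow the previous word."""
    return max(candidates, key=lambda w: bigram_prob(prev, w))


if __name__ == "__main__":
    print(pick_homophone("team", ["beet", "beat"]))
    print(pick_homophone("a", ["beat", "beet"]))
```

Both words sound identical to the acoustic model, so the word that came before them does all the work here: after "team" the sketch picks "beat", and after "a" it picks "beet".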

But these models actually aren't the best for language, mainly due to issues with the probabilities of words in longer phrases. An N-gram model only looks at the previous N-1 words, so as a sentence gets longer, the words you said early on drop out of view, and they often carry the context needed to make sense of your complete thought.
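One way to read this limitation is that an N-gram model has a very short memory. The short sketch below assumes the standard setup in which the model conditions only on the previous N-1 words; the function name and both sentences are invented for illustration.

```python
def ngram_context(words, n):
    """Return the n-1 preceding words that an n-gram model conditions on."""
    return tuple(words[-(n - 1):]) if n > 1 else ()


# Two sentence openings on very different topics...
sports = "after last night's scores I really want to talk about the".split()
cooking = "after making the borscht I really want to talk about the".split()

if __name__ == "__main__":
    # ...look identical to a trigram model (n = 3) when it predicts the next word.
    print(ngram_context(sports, 3))
    print(ngram_context(cooking, 3))
```

Both calls return the same two words, "about" and "the", so a trigram model would make the same guess for the next word in either sentence. The scores and the borscht have already fallen out of its window.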

However, it is simple and easy to implement, making it a great match for a company like Google that enjoys throwing servers at computational problems. You can do further reading on N-gram Modeling at the University of Washington, or you can watch a lecture at Coursera.

Shouting at Clouds: Apps & Devices

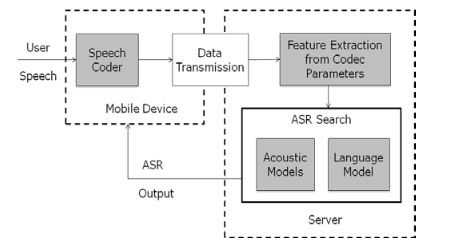

Anyone who's used Siri knows the frustration of a slow network connection. This is because your commands to Siri are sent over the network to be decoded by Apple. Cortana for Windows Phone also requires a network connection to function properly. In contrast, Amazon's Echo is just a Bluetooth speaker without any Internet connection.

Why the difference? Because Siri and Cortana need heavy-duty servers to decode your speech. Could it be done on your phone or tablet? Sure, but you'd kill your performance and battery life in the process. It just makes more sense to offload the processing to dedicated machines.

Think of it this way: your command is a car stuck in the mud. You could probably push it out yourself with enough time and effort, but it will take hours and leave you exhausted. Instead, you call roadside assistance and they pull your car out in just a few minutes. The downside is that you have to make the call and wait for them, but it's still faster and less taxing.

Desktop software like Nuance's tends to use local resources, thanks to the more powerful hardware. After all, in the words of Steve Jobs, your desktop is a truck. (Which makes it a bit silly that OS X is using servers for its processing.) So when you need to process language and voice, it's already equipped well enough to handle it on its own.

On the other hand, Android allows developers to include offline speech recognition in their apps. Google likes to get ahead of technology, and you can bet the other platforms will gain this ability as their hardware gets more powerful. No one likes it when poor coverage or bad reception lobotomizes their device.

Start Using Voice Commands Now

Now that you know the fundamental concepts, you should play around with your various devices. Try out the new voice typing in Google Docs. As if the Web office suite wasn't already powerful enough, voice control allows you to completely dictate and format your documents. This expands on the powerful tech they already designed for Chrome and Android.

Other ideas include setting up your Mac to use voice commands and setting up your Amazon Echo with automated checkout. Live in the future and embrace talking to your gadgets -- even if you're just ordering more paper towels. If you're a smartphone addict, we've also got tutorials for Siri, Cortana, and Android.

What is your favorite use of voice control? Let us know in the comments.

Image Credits: T-flex via Shutterstock, Terencehonles via Wikimedia Foundation, Arizona State, Cienpies Design via Shutterstock