Software is getting smart. It's a slow, uneven process -- but it's also seemingly unstoppable. One by one, the hard problems of machine learning are falling to powerful new theoretical tools, letting us build software that can do some truly impressive things.

Some applications, like self-driving cars, are a few years off. What you may not realize, though, is that machine learning is already all around you, and it can exert a surprising degree of influence over your life. Don't believe me? You might be surprised.

Let's start with an obvious example.

Content Recommendations

When you browse Spotify, Netflix, or Amazon's Kindle Store, machine learning algorithms are watching you. It's their job -- they need that information to generate recommendations, a machine learning technology so ubiquitous that you may never have thought about it.

It's everywhere -- in all likelihood, most of the media you consumed over the last few years has been selected for you by these algorithms.

If you think about it, this sort of recommendation seems impossible. How does a computer program know you'll like The West Wing? Has it watched it? Does it feel the humanity of Martin Sheen's nuanced portrayal of President Bartlet? Does it get the jokes? Does it vaguely have the hots for Janel Moloney?

As it turns out, these algorithms do exactly none of these things. Instead, they rank content based entirely on usage. These algorithms ignore the substance of the content, and focus instead on what sort of people like it, and what else they tend to like.

By looking at what you already like, the algorithm can figure out which of its learned stereotypes you most resemble, and make very accurate guesses about your tastes. Do you like The Daily Show, Cabin in the Woods, and House of Cards? Well, an awfully large proportion of the people in that category like The West Wing. Odds are, you will too.
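To make the idea concrete, here is a minimal sketch of usage-based recommendation in Python. It is not how Netflix or Spotify actually do it; the users, their likes, and the choice of Jaccard overlap as the similarity measure are all assumptions made for illustration. Notice that the code never looks at what any show is about, only at who liked it.

```python
from collections import defaultdict

# Hypothetical data: each made-up user maps to the set of titles they liked.
LIKES = {
    "ana":   {"The Daily Show", "House of Cards", "The West Wing"},
    "ben":   {"The Daily Show", "Cabin in the Woods", "The West Wing"},
    "chloe": {"House of Cards", "Cabin in the Woods", "The West Wing"},
    "dev":   {"Cabin in the Woods", "Saw"},
}


def jaccard(a, b):
    """Overlap between two sets of liked titles, from 0.0 to 1.0."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def recommend(my_likes, likes_by_user, top_n=3):
    """Rank unseen titles by the summed similarity of the users who liked them."""
    scores = defaultdict(float)
    for other_likes in likes_by_user.values():
        similarity = jaccard(my_likes, other_likes)
        # Only titles the viewer has not already liked can be recommended.
        for title in other_likes - my_likes:
            scores[title] += similarity
    # Highest score first; ties broken alphabetically.
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return [title for title, score in ranked[:top_n] if score > 0]


you = {"The Daily Show", "Cabin in the Woods", "House of Cards"}
print(recommend(you, LIKES))  # ['The West Wing', 'Saw']
```

The West Wing wins because three fairly similar users all liked it, while the second title is backed by only one weakly similar user.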

Interestingly, this previously universal approach is starting to change, as we reach the limit of what you can figure out from usage patterns alone. Just for starters -- how do you rank new content that has no views yet?

There's also the issue of diminishing returns. Netflix is good at recommendations, but it isn't going to get much better using existing techniques. In 2009, Netflix concluded a one-million-dollar competition to find a superior version of its recommendation algorithm, and the winner improved the recommendations by only about 10%. Since then, improvements have been even smaller. At some point, the only way to do much better would be to actually teach computers to understand art.

So, that's what tech companies are doing.

Last year, a Spotify intern named Sander Dieleman applied a powerful machine learning technology called "deep learning" to their database, allowing the program to learn to analyze music. The neural network automatically -- using nothing but raw audio data -- came to recognize distinctive patterns in the music.

One low-level neuron fired only in response to vibrato singing. Deeper in the network was a neuron that had learned to identify Christian rock. Another fired for chiptunes and 8-bit music. Another fired only for Armin van Buuren. Many others were nameless but still expressed some meaningful property of the music.

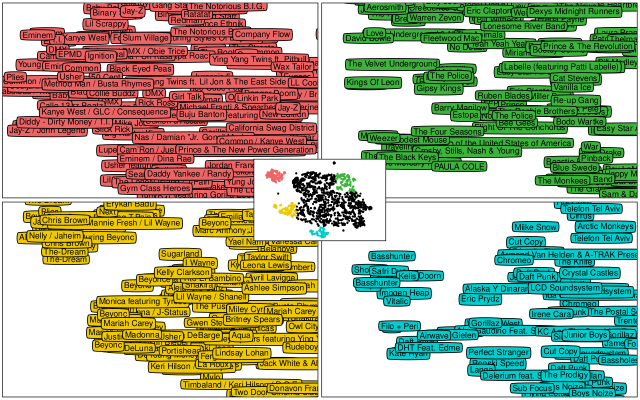

Here's a map Dieleman generated of every artist on Spotify, grouped by their similarity to one another.

(Seriously, the blog post about this is fascinating -- go read it.)

All of these features together provide much richer grounds for recommendations, because the system can recommend songs, not just by who else likes them, but by their actual abstract properties. Spotify hasn't rolled this out to consumers yet, but it's only a matter of time. Right now, getting the most out of Spotify requires some specific tricks and know-how. In the future, it may happen automatically.
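Here is a rough sketch of what recommending by "actual abstract properties" could look like. The track names, the three features, and every number are invented for illustration; a real network would learn far more features from raw audio. Cosine similarity is one common way to compare feature vectors, not necessarily the one Spotify uses.

```python
import math

# Hypothetical learned features per track: (vibrato, chiptune, trance).
TRACKS = {
    "track_a": (0.9, 0.0, 0.1),
    "track_b": (0.8, 0.1, 0.2),
    "track_c": (0.0, 0.9, 0.1),
    "brand_new_track": (0.1, 0.0, 0.9),  # has no listeners yet
}


def cosine(u, v):
    """Cosine similarity between two feature vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0


def most_similar(seed, tracks):
    """Return every other track, most similar to `seed` first."""
    others = [name for name in tracks if name != seed]
    return sorted(
        others,
        key=lambda name: cosine(tracks[seed], tracks[name]),
        reverse=True,
    )


print(most_similar("track_a", TRACKS))
```

Because the ranking depends only on the audio features, a track with no listening history can still be compared against everything else in the catalog.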

Could the same be done for, say, movies?

It's not out of the question. Google already has an algorithm that can understand a photograph well enough to describe it in English with a fair degree of accuracy. Google researcher Geoffrey Hinton, known as the "Father of Neural Networks," said in his Reddit AMA that he'll be disappointed if we don't have an algorithm that can describe the events of a movie within five years. That kind of analytical ability would give Netflix a lot of additional information to use in making smarter movie recommendations.

High Frequency Trading

Another area that we don't often think about is algorithmic trading. In 2012, half of all stock market trades were made by computer programs. Why? Because humans are slow. Market events can happen on a timescale of milliseconds. Humans can't even interpret information that fast, much less act on it.

High-frequency trading puts those financial decisions in the hands of computer algorithms that can predict the behavior of stocks, and buy and sell accordingly. While they lack the judgment of human traders, their speed gives them access to opportunities that are simply too fast for human beings.

Algorithmic trading affects your financial life in a variety of ways. Your investments exist within a market that practically seethes with algorithms. They change the dynamics of markets, in both good and bad ways. They offer more liquidity, and a buffer against volatility, but they also introduce certain risks.

Algorithmic trading has introduced entirely new kinds of financial crime. In 2010, a single trader using a legion of automated algorithms in an attempt to illegally manipulate the market accidentally triggered a trillion-dollar market crash -- the stock market dropped by about 9% in a matter of minutes.

Ironically, the crash was worsened by legitimate trading algorithms dumping positions in response to the drop. Because many of them used similar strategies at the time, they fed on one another, creating a self-reinforcing feedback loop. Though the market recovered quickly, the astonishing fluctuation shows just how much control of the financial world we've ceded to these algorithms.
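The cascade is easy to see in a toy model. The sketch below is not a reconstruction of the 2010 crash; the thresholds, the per-sale price impact, and the rule that each algorithm sells exactly once are all simplifying assumptions. It only shows how similar sell rules can turn a modest dip into a much larger one.

```python
def simulate_selloff(initial_shock, thresholds, impact_per_seller):
    """Return the total percentage drop once no more algorithms are triggered.

    Each hypothetical algorithm sells once when the cumulative drop reaches
    its threshold, and each sale pushes the price down by a further
    `impact_per_seller` percentage points.
    """
    drop = initial_shock
    remaining = sorted(thresholds)
    while remaining and remaining[0] <= drop:
        remaining.pop(0)           # this algorithm dumps its position...
        drop += impact_per_seller  # ...which deepens the drop for the rest
    return drop


# Five made-up algorithms with closely spaced sell thresholds (in percent).
thresholds = [1.0, 1.5, 2.0, 2.5, 3.0]

print(simulate_selloff(0.5, thresholds, 0.5))  # 0.5: nobody is triggered
print(simulate_selloff(1.0, thresholds, 0.5))  # 3.5: every algorithm sells
```

A shock just large enough to trip the first threshold ends up tripping all of them, because each sale moves the price into the next algorithm's trigger range.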

Advertising

Advertising is hard. Consumers are fickle and need to be bribed, flattered, and otherwise manipulated into buying a product. There's a limit to how effectively you can manipulate people when you have to communicate with them en masse. People are different, and the same products and messages won't appeal to all of them.

Needless to say, the existence of the Internet and computers has fundamentally changed the game for advertisers. Now, advertisers can pinpoint a message to a specific person, figuring out exactly what they want and need. To do so, they rely on machine learning algorithms that can look at someone's browsing and purchasing habits, and make inferences about what they might buy in the future.

The power of these algorithms was shown off to stark effect in the infamous case, shared by Target statistician Andrew Pole, in which a Target manager was confronted by an irate father, complaining that his teenage daughter was being sent booklets of coupons designed for pregnant women. The manager apologized, and the father left. When the manager called to follow up, he was surprised to hear the father apologize, having discovered that Target's machine learning software was correct: his daughter was pregnant.

This was one of the incidents, according to Pole, that caused Target to begin to hide the effectiveness of its machine learning algorithms. According to Pole,

"We are very conservative about compliance with all privacy laws. But even if you’re following the law, you can do things where people get queasy. [...] Then we started mixing in all these ads for things we knew pregnant women would never buy, so the baby ads looked random. [...] And we found out that as long as a pregnant woman thinks she hasn’t been spied on, she’ll use the coupons. She just assumes that everyone else on her block got the same mailer for diapers and cribs. As long as we don’t spook her, it works."

In other words, the targeting algorithms are so powerful that Target has to actively hide their accuracy to avoid scaring customers. These algorithms can have a powerful impact on what we buy, and (when used correctly) they're completely invisible.

Web Rankings

We hear all the time about things that are "trending," or "blowing up," or "going viral." Generally, people think about this as an organic process. What they might overlook, at first glance, is that almost all of this activity is happening on a handful of websites: Google, Reddit, Twitter, Tumblr, and Facebook. Most of these websites use variations on a machine learning algorithm to determine what you do and do not see, and those algorithms have a powerful effect on which stories "go viral" and which don't.

For most of these sites, the algorithms they use to rank content are proprietary -- a trade secret.

In the case of Reddit, the algorithm used to control which posts make it to the front page is heinously complicated, in an extremely unsuccessful attempt to make it more difficult to game. The same goes for Twitter and Google. All of this is a little alarming, because this stuff can matter a lot.

According to psychologist Robert Epstein, Google's choice of ranking algorithm could single-handedly determine the outcome of more than a quarter of worldwide presidential elections. That's a lot of power in the hands of a piece of software.

Learn to Love the Algorithms

The lesson to take away from all this isn't panic. We've been ceding power to the robots for a while now -- and, with a few exceptions, the world still seems to be going pretty well. There's little cause to stock up on canned food and shotguns just yet.

However, it does pay to be aware of the degree to which these algorithms influence your life. Whose interests are they representing? Are your choices as free as they feel?

What do you think? Is this software creepy? Interesting? Let us know in the comments!

Image Credits: Marionette pose via Shutterstock, robot arm via Shutterstock