Microsoft Excel is excellent at so many day-to-day tasks. But now and then, you encounter one of its shortcomings: the size of a spreadsheet. We've covered how to make an Excel spreadsheet smaller or split a large CSV file into multiple files, and have several methods for you to choose from.

Why Split a Large CSV Into Multiple Files?

You might wonder, "Why would I need to split a large Excel file into multiple smaller files?" It's an interesting question, especially as Excel has a spreadsheet row limit of 1,048,576.

Over one million rows sounds phenomenal. However, hitting the row limit is easier than you think, especially during certain tasks. For example, if you're marketing via email, you might import a CSV file (What Is a CSV File?) containing millions of email addresses. The only problem is, how do you manage a spreadsheet with that many addresses in? Furthermore, what if someone sends you a CSV that is already over the limit (from another program)?

If that sounds like an issue you're likely to face, check out the following five ways to split a large CSV or Excel file into multiple smaller files.

Don't have a large CSV file handy but want to play along at home? I'm using the COVID-19 Open Research Dataset in the examples, which you can download and use too.

1. Break Up CSV Files Using a Program

There are several useful CSV splitter programs out there. Here are two of the best. Fair warning, though, as these programs work, they sometimes run into memory issues, a common problem for CSV-splitting programs.

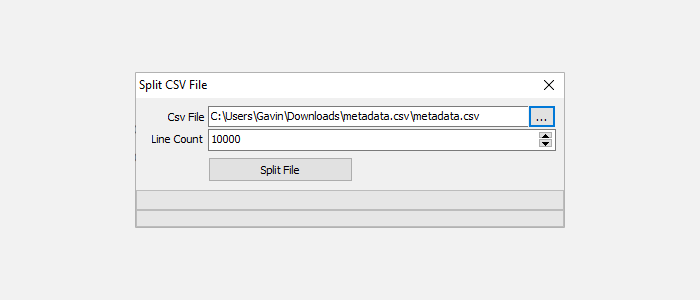

Free Huge CSV Splitter

The Free Huge CSV Splitter is a basic CSV splitting tool. You input the CSV file you want to split, the line count you want to use, and then select Split File. The line count determines the number of output files you end up with.

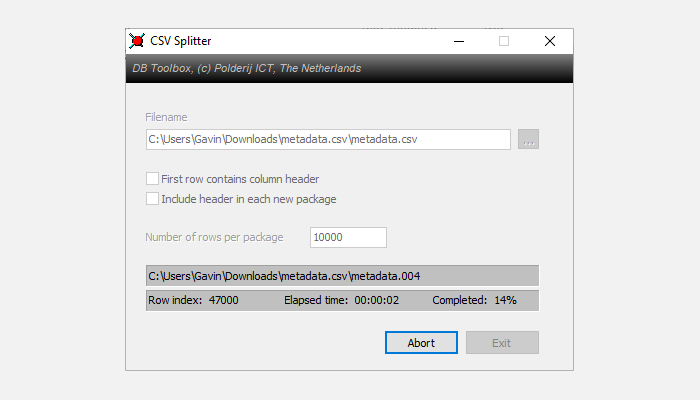

CSV Splitter

CSV Splitter is the second tool. It offers much the same functionality as Free Huge CSV Splitter, albeit with a slightly sleeker design. It splits your CSV into small chunks rapidly, allowing you to determine the line count you want to use.

2. Use a Batch File

Next up, create a programmable batch file. You can use a batch file to process the CSV into smaller chunks, customizing the file to deliver different chunks.

Open a new text document, then copy and paste the following:

@echo off

setlocal ENABLEDELAYEDEXPANSION

REM Edit this value to change the name of the file that needs splitting. Include the extension.

SET BFN=HCAHPSHospital.csv

REM Edit this value to change the number of lines per file.

SET LPF=2500

REM Edit this value to change the name of each short file. It will be followed by a number indicating where it is in the list.

SET SFN=HosptialSplitFile

REM Do not change beyond this line.

SET SFX=%BFN:~-3%

SET /A LineNum=0

SET /A FileNum=1

For /F "delims==" %%l in (%BFN%) Do (

SET /A LineNum+=1

echo %%l >> %SFN%!FileNum!.%SFX%

if !LineNum! EQU !LPF! (

SET /A LineNum=0

SET /A FileNum+=1

)

)

endlocal

Pause

You'll need to configure the batch file before running. I'll tell you what each command does, and you can alter it to suit the size of your batch file as well as the required output.

- "SET BFN=" should point to the CSV you need to break down

- "SET LPF=" is the number of rows you'd like to limit your new file to

- "SET SFN=" is the new naming scheme for your split files

Once you've entered your variables, head to File > Save As. Choose a filename, and select Save. Then, select your newly saved text file and press F2 to rename it. Replace the .txt extension with .bat and press OK when the warning appears. Now, you can split your large CSV file into smaller output files.

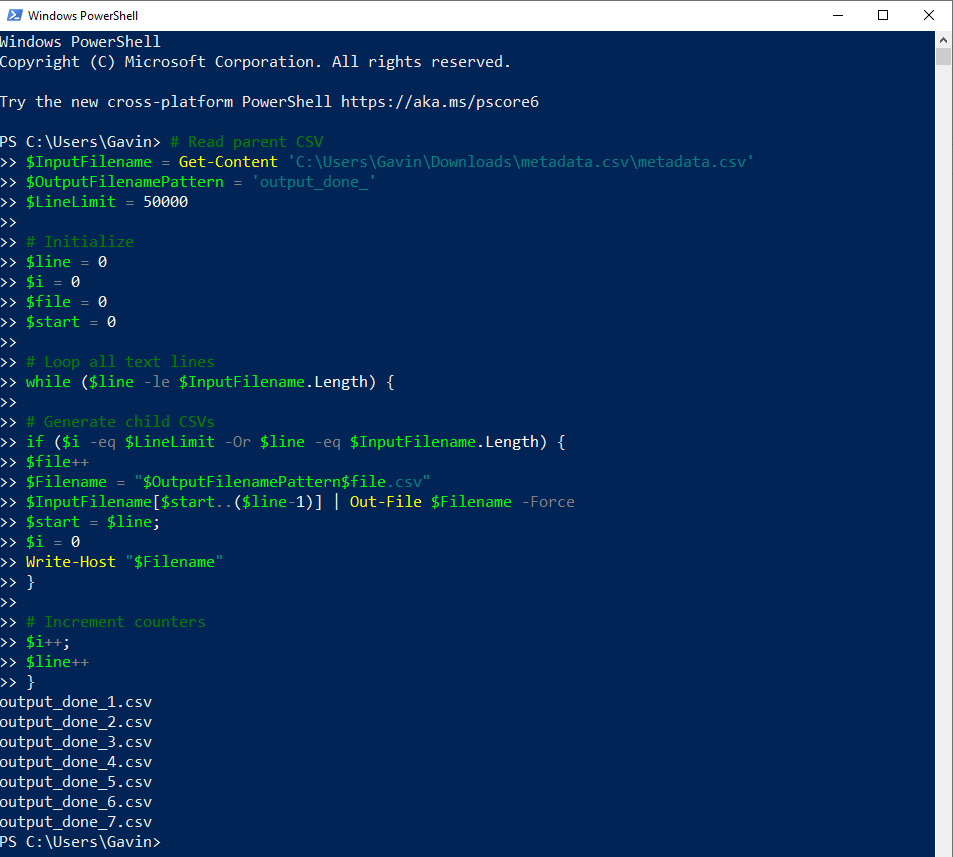

3. Use a PowerShell Script to Break Up a CSV File

You can use batch files for a wide range of day-to-day tasks. But PowerShell scripts are faster, especially for this type of processing and division.

The following script quickly cuts your large CSV into smaller files.

First up, press CTRL + X to open the Windows Power Menu, then select PowerShell. If PowerShell isn't an option, input powershell in your Start Menu search bar and select the Best Match.

Now, copy and paste the following script:

$InputFilename = Get-Content 'C:\file\location'

$OutputFilenamePattern = 'output_done_'

$LineLimit = 50000

$line = 0

$i = 0

$file = 0

$start = 0

while ($line -le $InputFilename.Length) {

if ($i -eq $LineLimit -Or $line -eq $InputFilename.Length) {

$file++

$Filename = "$OutputFilenamePattern$file.csv"

$InputFilename[$start..($line-1)] | Out-File $Filename -Force

$start = $line;

$i = 0

Write-Host "$Filename"

}

$i++;

$line++

}

Replace the file location in the first line with your CSV file, then run the script. The script outputs the smaller CSV files in your user directory. For example, my CSV files are found at C:\Users\Gavin with the file name output_done_1.csv. You can change the output name by altering the $OutputFilenamePattern = 'output_done_' line.

You can find the original script at SPJeff.

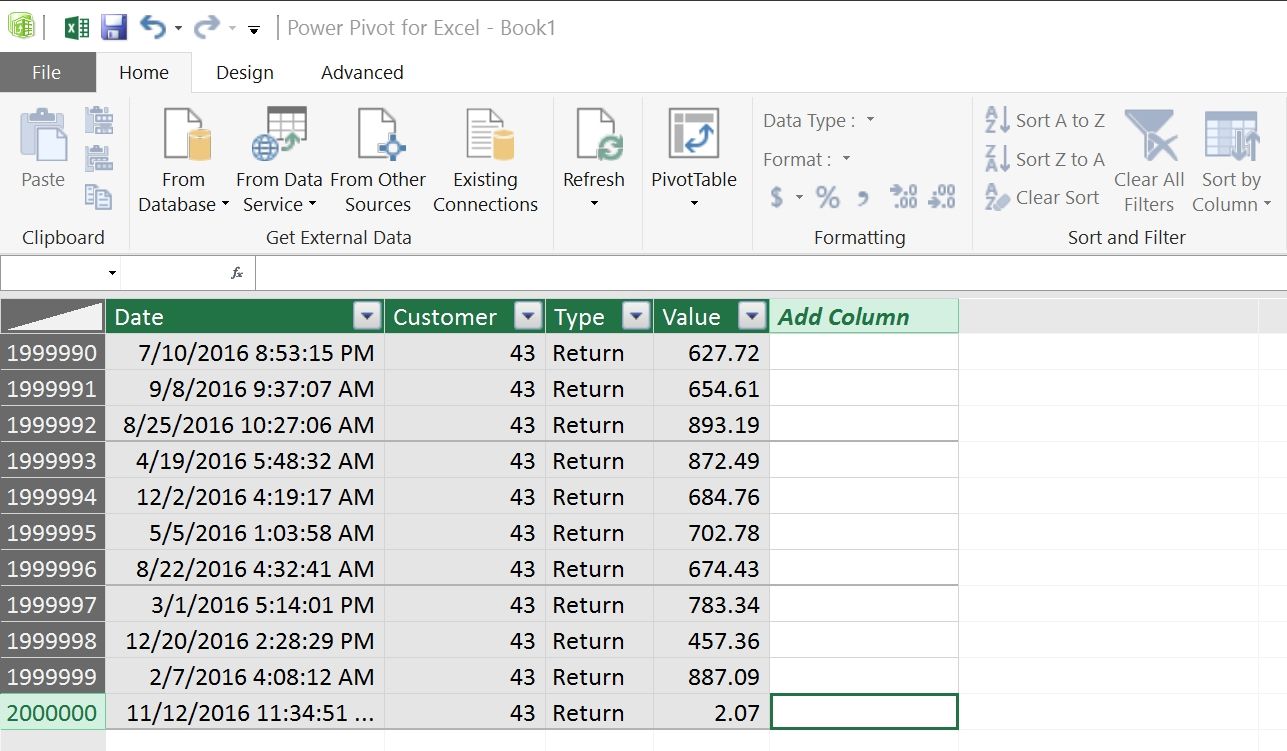

4. Break Up a Large CSV Using Power Pivot

Your penultimate solution to breaking up a large CSV file into small bits doesn't actually break it down. Rather, it lets you load your massive CSV file into Excel and use the Power Pivot tool to open it. That's right; you can effectively ignore the Excel line limit and manage the file within the program.

You achieve this by creating a data link to the CSV file, then using Power Pivot to manage the contents. For a full explanation and tutorial, read Jose Barreto's blog detailing the process.

In short, Barreto creates a Pivot Table using "up to 8.5 million rows with no problem at all." The above image comes from the blog post, showing a total of 2 million rows in use in Excel.

Remember, this process doesn't split the CSV into small chunks. However, it does mean you can manipulate the CSV in Excel, which is a very handy alternative. If you need more tips, find out how to use a Pivot Table for data analysis.

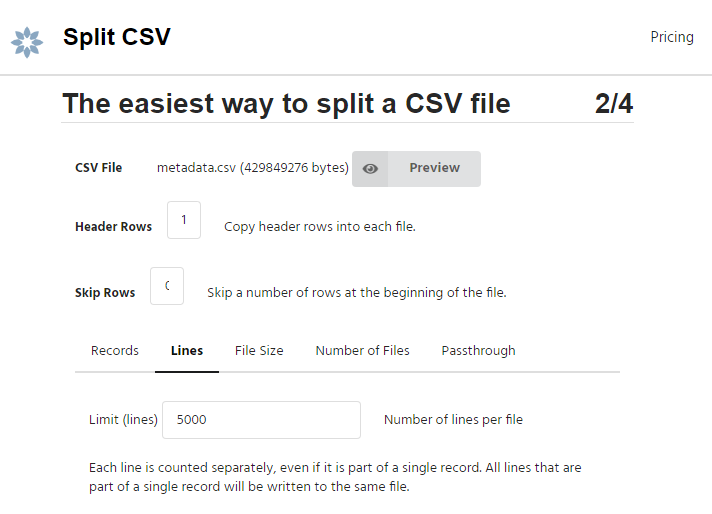

5. Break Up Large CSV Online Using Split CSV

There are also online services that break your big CSV file into smaller bits. One such option is Split CSV, a free online CSV splitter.

Split CSV managed the COVID-19 dataset fine, splitting it into handy chunks. As with other tools, you define the line count for each file and let it split it up. However, I didn't have a large CSV file to hand to test with, and, as such, your experience may vary.

Split CSV includes premium options, too. For a subscription fee, you can use a custom delimiter, choose a selection of output file types, remove certain characters from the output files, and delete duplicate lines.

Break Down Your CSV Files Into Easily Manageable Chunks

You now have five solutions for breaking your CSV files into small bits, making them easy to manage. The solutions vary in speed and the size of the CSV files they can manage, so you may have to experiment to find the solution that works best for you. And the best part of all? These CSV splitting techniques work on Windows 10 and Windows 11. You can even use the online CSV splitting tool on macOS and Linux!